I am so pleased to be able to announce today the arrival of my new book Print & Pattern Nature published by Laurence King. This is the fifth book in the P&P Series and focuses on design using flowers, leaves, birds, trees, butterflies etc. 101 fantastic designers have kindly submitted their work and given an insight into how they create their works, their inspirations and influences. Just some

Viewing: Blog Posts Tagged with: books, Most Recent at Top

Blog: print & pattern

JacketFlap tags: BOOKS

Blog: print & pattern

JacketFlap tags: BOOKS, PACKAGING, MUGS, ORLA KIELY, KIDS DESIGN

I spent a couple of hours in Bristol last week and managed to take a look at some of the shops in Park Street like 'Rise' and 'Guild' and also popped into the Arnolfini Gallery. I mainly visited book and record stores but still managed to find some inspiring prints and patterns. Here are my snaps from the day featuring mainly book covers, some cards and wrap, and a smattering of homewares.

Blog: print & pattern

JacketFlap tags: BOOKS, ILLUSTRATION, CHRISTMAS, CARDS

You may remember I posted about the publication of a new book by designer Paul Farrell a few weeks ago. But now that I have actually seen the book in the flesh, I just had to post again with new pictures, as it is so full of beautiful graphics. Called 'Great Britain in Colour', it is a treasure trove of colour and shape across a whopping 240 pages - just a small sample of which I have snapped

Blog: Blog for the Morbidly Thoughtful

JacketFlap tags: Books, Travel, History, Writing, Inspiration, Crazy, Novel: A Glimmer of Steel, They Did What?

I was saddened to learn today that Castle Miranda (also known as Château de Noisy) in Belgium was slated to be torn down this month. Back in 2012 I stumbled across the gorgeous pictures from PROJ3CT M4YH3M of this heart-breaking, beautiful, decaying castle. The ceilings especially inspired me to put pen to paper and write the scene in my novel Glimmer of Steel where Jennica comes to terms with her fate while staring up at her bedroom’s ceiling.

Since I don’t own any of the copyrights for the images I saw back in 2012, nor have I paid for licensing rights, I have the next best thing… links to the owners’ sites so you can hop over and view them yourself.

The first link is for a website (in German) with historical photos/drawings of the Castle in its original state. http://www.lipinski.de/noisy-historical/index.php

The second link is from Ian Moone’s and PROJ3CT M4YH3M’s website page that covered their first visit to Castle Miranda in 2012:

Urbex: Castle Miranda aka Château de Noisy Belgium – December 2012 (Part 1)

The third link is from Ian Moone’s and PROJ3CT M4YH3M’s second visit in 2014:

Urbex: Castle Miranda aka Château de Noisy Belgium – May 2014 (revisit)

So just as I’m getting ready to release Glimmer of Steel to Kindle Scout this month, and I’m looking for Castle Miranda pictures to share as an important visual inspiration for my writing, I learned the castle is being dismantled. Pascal Dermien recently photographed the start of the demolition and shared his photos on YouTube. You can see former turrets cast upon the ground, including the weather vane that used to spin atop the highest peak. Only the blogs, and photographs, memories, videos, and the occasional book will live on.

Blog: Elizabeth O. Dulemba

JacketFlap tags: Books

What a nice surprise! My picture book, Soap, Soap, Soap has been chosen to be included in the monthly books delivered through BOOKCASE.CLUB for Kids. Click the image to learn more.

Blog: print & pattern

JacketFlap tags: BOOKS, MUGS, TABLEWARE, STATIONERY, WALL ART, TEA TOWELS, STORE SNAPS, RUG DESIGN

Whilst in Selfridges last Friday I found they had an Anthropologie concession, so it was a nice chance to snap some of their latest products. Anthropologie have been working with an artist I really admire: Starla Michelle, who creates the most beautifully colourful flowers and animals. Her artworks have been used on textiles, plates, wall art and an entire alphabet of pretty monogrammed mugs. I

Blog: JZ ArtBlog

JacketFlap tags: books, projects, shameless self-promotion

Fun mail today! My new book through Magination Press, "A World of Pausabilities", came today! It is a great book by Frank Sileo about slowing down, reflecting, and being in the moment... something that I need to be reminded of myself. This title will be available in February 2017.

Blog: JZ ArtBlog

JacketFlap tags: books, shameless self-promotion, illustrators, the life and times of the artist

Thanks go out to Moonbeam Children's Book Awards for awarding "Big Red and the Little Bitty Wolf" a silver medal for the Picture Book Age 4-8 category! The author Jeanie Franz Ransom and I are so excited for this recognition! Thanks also go out to the publisher Magination Press for having me as a part of this great book project!

Blog: TWO WRITING TEACHERS

JacketFlap tags: book reviews, books, mentor texts, writing workshop, mentor text

Maribeth Boelts’s new book A Bike Like Sergio’s will appeal to readers and writers of all ages. It’s a heartfelt story with a message to which readers will relate; the right decision is…

Blog: JZ ArtBlog

JacketFlap tags: projects, shameless self-promotion, illustrators, books, illustration

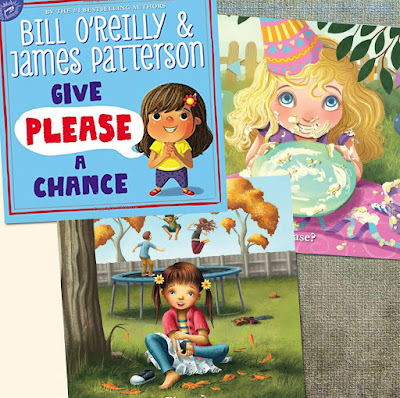

I am excited to announce that my artwork, along with several other illustrators from MB Artists, will be featured in Bill O'Reilly and James Patterson's new book "Give Please a Chance." My illustration is the one shown here at the bottom, with the girl and the trampoline. This title will be released on November 21st.

Blog: Cartoon Brew

JacketFlap tags: CalArts, Maureen Furniss, Animation Parlour, A New History of Animation, Thames & Hudson, Books

This new animation history textbook is based on the animation history courses that author Maureen Furniss teaches at CalArts.

The post ‘A New History of Animation’ Could Become The Definitive Textbook History of The Art Form appeared first on Cartoon Brew.

Blog: March House Books Blog

JacketFlap tags: Books, Guest Posts, Milly's Magic Quilt, Murray Natasha

Blog: print & pattern

JacketFlap tags: BOOKS, ILLUSTRATION

Illustrator, graphic designer and print-maker Paul Farrell's debut book 'Great Britain in Colour' was published on 22 September by Boxtree and Pan Macmillan. The 166 full-page colour illustrations are one year's work and the book was completed almost two years from the start. It is a personal journey full of memories and travels from the last 45 years or so. There are hidden gems, familiar

Blog: OUPblog

JacketFlap tags: Books, Law, television, Media, sexual abuse, child abuse, National Treasure, sex offenders, criminology, *Featured, TV & Film, false allegations, legal trial, Ros Burnett, sex abuse, The press, WASCA, Wrongful Allegations of Sexual and Child Abuse

Many people watching UK television drama National Treasure will have made their minds up about the guilt or innocence of the protagonist well before the end of the series. In episode one we learn that this aging celebrity has ‘slept around’ throughout his long marriage but when an allegation of non-recent sexual assault is made he strenuously denies it.

The post What if they are innocent? Justice for people accused of sexual and child abuse appeared first on OUPblog.

Blog: OUPblog

JacketFlap tags: Books, Literature, Language, q&a, fish, Oxford University Press, oed, oxford english dictionary, etymology, British, Linguistics, lexicographer, *Featured, oxford dictionaries, Peter Gilliver, Dictionaries & Lexicography, au naturel, aumoniere, chalazion, The Making of the Oxford English Dictionary, twiffler

Peter Gilliver has been an editor of the Oxford English Dictionary since 1987, and is now one of the Dictionary's most experienced lexicographers; he has also contributed to several other dictionaries published by OUP. In addition to his lexicographical work, he has been writing and speaking about the history of the OED for over fifteen years. In this two-part Q&A, we learn more about how his passion for lexicography inspired him.

The post Learning about lexicography: A Q&A with Peter Gilliver part 1 appeared first on OUPblog.

Blog: OUPblog

JacketFlap tags: Books, Music, cello, q&a, author interview, cellist, lincoln center, music teacher, *Featured, Putting it All Together, New York Philharmonic, Alexander Technique, Bow and You, Evangeline Benedetti, NY Phil

What was it like as one of the few female performers in the New York Philharmonic in the 1960s? We sat down with cellist and author Evangeline Benedetti to hear the answer to this and other questions about performance and teaching careers, favorite composers, and life behind the doors of Lincoln Center.

The post In conversation with cellist Evangeline Benedetti appeared first on OUPblog.

Blog: OUPblog

JacketFlap tags: Books, History, myths, vikings, lord of the rings, Europe, Viking age, runes, Scandinavia, saga, Editor's Picks, *Featured, icelandic saga, old norse, valhalla, beyond the northlands, burial ceremoniesnordic, dragonhead ship, dragonhead ships, eleanor barraclough, horned helmet, ivar the boneless, nordic burials, nordic world, ragnar hairy-breeches, Scandinavian history, viking facts, viking history, viking myths

The viking image has changed dramatically over the centuries. Romanticized in the 18th and 19th centuries, vikings are now alternately portrayed as savage and violent heathens or adventurous explorers. Stereotypes and clichés are rampant in popular culture, and vikings and their influence appear to various extents, from Wagner's Ring Cycle to the comic Hägar the Horrible, and J.R.R. Tolkien's Lord of the Rings to Marvel's Thor. But what is actually true? Eleanor Barraclough lifts the lid on ten common viking myths.

The post 10 myths about the vikings appeared first on OUPblog.

Blog: March House Books Blog

JacketFlap tags: Books, Giveaways

Blog: OUPblog

JacketFlap tags: *Featured, Books, Geography, Brazil, atlas, Sweden, Place of the Year, rio, Atlas of the World, The White House, Aleppo, Quizzes & Polls, Election 2016, brexit, The UK, Tristan da Cunha

Quite a lot has happened in 2016. The year has flown by with history-making events such as Brexit, the presidential election in the United States, and the blockade of Aleppo, to name a few.

The post Place of the Year 2016 longlist: vote for your pick appeared first on OUPblog.

Blog: OUPblog

JacketFlap tags: Books, gothic, Oxford Etymologist, church, German, blessing, word origins, curse, Greek, etymology, anatoly liberman, Linguistics, Anglo-Saxon, cursing, *Featured, Word Origins And How We Know Them, The Oxford Etymologist, Old English

Curse is a much more complicated concept than blessing, because there are numerous ways to wish someone bad luck. Oral tradition (“folklore”) has retained countless examples of imprecations. Someone might want a neighbor’s cow to stop giving milk or another neighbor’s wife to become barren.

The post Blessing and cursing part 2: curse appeared first on OUPblog.

Blog: OUPblog

JacketFlap tags: Books, Sociology, Politics, australia, Social Sciences, *Featured, Business & Economics, public servant, british colonies, bureaucracy, Only in Australia, The History Politics and Economics of Australian Exceptionalism, William Coleman, Australian Bureau of Statistics, Battle of Yorktown, colonial regime, Nelson T. Johnson, New World society, officialdom

‘Public Servant’ — in the sense of ‘government employee’ — is a term that originated in the earliest days of the European settlement of Australia. This coinage is surely emblematic of how large bureaucracy looms in Australia. Bureaucracy, it has been well said, is Australia’s great ‘talent,’ and “the gift is exercised on a massive scale” (Australian Democracy, A.F. Davies 1958). This may surprise you. It surprises visitors, and excruciates them.

The post Australia in three words, part 3 — “Public servant” appeared first on OUPblog.

Blog: OUPblog

JacketFlap tags: toilet, *Featured, Hezekiah, Iron Age, Arts & Humanities, A Geography of Royal Power in the Biblical World, decommissioning, desecration, Jehu, Judah, Lachish, Levantine, religious sacrifice, sacrileges, Stephen C. Russell, The King and the Land, Books, History, Religion, Middle East, israel, altar

In September, the Israel Antiquities Authority made a stunning announcement: at the ancient Judean city of Lachish, second only to Jerusalem in importance, archaeologists have uncovered a shrine in the city’s gate complex with two vandalized altars and a stone toilet in its holiest section. “Holy crap!” I said to a friend when I first read the news.

The post Holy crap: toilet found in an Iron Age shrine in Lachish appeared first on OUPblog.

Blog: OUPblog

JacketFlap tags: Anti-Federalists, Federalists, The Federalist Papers, infographic, *Featured, 1787, party politics, OUP Infographic, american founders, Michael Klarman, revolutionary america, The Anti-Federalist Papers, The Framer's Coup, The Making of the United States Constitution, Books, History, american history, America, founding fathers, Infographics

Between October 1787 and August 1788, a collection of 85 articles and essays was distributed by the Federalist movement. Authored by Alexander Hamilton, James Madison, and John Jay, The Federalist Papers highlighted the political divisions of their time.

The post Federalists and Anti-Federalists: the founders of America [infographic] appeared first on OUPblog.

Blog: OUPblog

JacketFlap tags: What Everyone Needs to Know, sexual assault, *Featured, student protests, WENTK, racial inequality, campus politics, Chief Diversity Officer, Jonathan Zimmerman, political protests, undergraduate education, university administrators, university policies, Books, diversity, Education, Politics, Racism, social change, university, affirmative action

Like their forebears in the 1960s, today’s students blasted university leaders as slick mouthpieces who cared more about their reputations than about the people in their charge. But unlike their predecessors, these protesters demand more administrative control over university affairs, not less. That’s a childlike position. It’s time for them to take control of their future, instead of waiting for administrators to shape it.

The post How university students infantilise themselves appeared first on OUPblog.

Blog: OUPblog

JacketFlap tags: Books, History, Literature, Data, ireland, nineteenth century, Nineteenth Century Literature, irish lit, nineteenth century europe, nineteenth century ireland, ordinance survey, *Featured, big data, Arts & Humanities, european literature, Cóilín Parsons, data science, Irish Literature

Initially, they had envisaged dozens of them: slim booklets that would handily summarize all of the important aspects of every parish in Ireland. It was the 1830s, and such a fantasy of comprehensive knowledge seemed within the grasp of the employees of the Ordnance Survey in Ireland.

The post Big data in the nineteenth century appeared first on OUPblog.

This looks stunning! Congratulations and Happy Christmas! xx

Looks great!

Thank you so much, Jane and Tiffany. I am really pleased with this new book and hope it will be well received. Happy Christmas to you too, Jane.

Wow. Your book looks incredible! Great work :-)

Can't wait to get my mitts on this!!! My mum is getting it for me for Christmas. Happy Christmas to you!!! X

Thank you so much, Jenny and Ollie :) Merry Christmas, Jenny; I hope you will like the book!