
Hopefully, someday my contribution to peace

Will help just a bit to turn the tide

And perhaps I can tell my children six

And later on their own children

That at least in the future they need not be silent

When they are asked, "Where was your mother, when?"

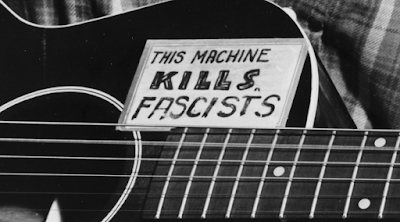

—Pete Seeger, "My Name Is Lisa Kalvelage"

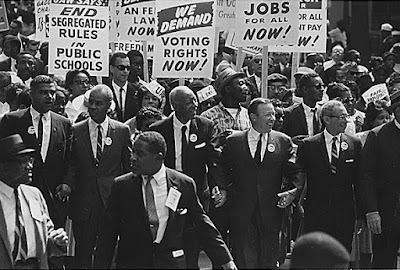

Faculty and grad students at my university are being targeted by right-wing groups who publicize their names and contact information because these faculty and students have criticized racist and sexist acts on campus. The Women's Studies department in particular has been attacked in the state newspaper for the crime of offering supplies to students who were participating in a protest against Donald Trump. The president of our university just sent out an email giving staff and students information about what to do if they are attacked. Numerous students have reported being harassed, spat upon, told they'd be deported, etc.

The right wing detests many segments of academia. The basic idea of women's studies programs, ethnic studies programs, queer studies programs, etc. is anathema to them, but right-wing vitriol is not limited to the humanities: ask a climate scientist what life is like these days.

These trends are not new, but they are emboldened and concentrated by the success of Donald Trump and the nazis, klansmen, and various troglodytes associated with him.

Hate crimes are on the rise. The media, trapped in the ideology of false equivalence, terrified of losing access to people in power, besotted by celebrity, makes white supremacists look like GQ models and ends up running headlines questioning if Jews are people. Things will only get worse.

The chilling effect is already strong.

Within the last few days, I've heard from a number of academics (some with tenure) who say they are being very careful. They're changing their social media habits (in some cases, deleting their social media accounts altogether), making themselves less accessible, being careful not to show any political partiality around their students. They need their jobs, after all. They have bills to pay, kids to support, lives to live. Just yesterday, one of my friends was ordered to a meeting with a dean to justify a Tweet from her personal account, a Tweet I had to read three times before I could figure out what in it might ever be construed as "misrepresenting the university". She's got tenure, at least, so she might be safe for now. For now.

This is not to say that anti-Trump or left-leaning faculty ought to be celebrated as always correct and perfect. They're as capable of being incoherent, punitive, and authoritarian in their views as Trump is, and a few of the things some of my friends and colleagues said are things I completely disagree with. But they're human beings at a highly anxious moment expressing their views. They're not sending hate mail or harassing people, yet now they are targets of hate and harassment campaigns and many have pulled back, hidden, or deleted whatever they could of their public presence. These are wonderful people, teachers, and scholars. We need to hear their voices. We need them not to be strangled by fear. But they're scared.

Most teaching faculty in the U.S. don't have tenure, and tenure is often weak. The neoliberalization of academia has seen to that, and there's little reason to believe things will get better. The Wisconsin model is one the Republicans hope to make national, and with control of all levels of federal government and a majority of state governments, that goal is within their reach.

The series of moral panics that have been spreading through academia (and spreading even more in the discourse around and about academia) is effective in its work, and it is especially effective now in the Age of Trump.

"Political correctness" is a powerful term within the discourse, one that the right wing uses skillfully to stifle dissent and to create a new common sense that is more favorable to the right's perspective. State universities like mine are especially vulnerable to the interference of politicians, even though at this point we get so little funding from the state that the term barely applies anymore. The triumph of the right wingers means institutions susceptible to political pressure will adapt to the new rulers.

Moves toward fascism may seem inimical to academia, but they're not at all.

Universities can adapt.

I don't have any original or even particularly insightful answers to this stuff. I've been reading lots of Stuart Hall, Audre Lorde, and Victor Klemperer. I sent money to organizations committed to standing against Trump and fascism. I'm matching my publisher's fundraiser for the ACLU. I'm adding Parable of the Sower to the readings in my First-Year Composition course in the spring. I stuck a pink triangle pin on my backpack; I hadn't done so for many years, but it feels important now to be visible.

I've got a lot less to lose than many of my friends and colleagues. (I'm a white male for one thing. White supremacist patriarchy lets us get away with more.) I'll protest, I'll speak out, I'll risk what I can see my way to risk.

Back in the good olde days of George W. Bush, I was a columnist for a local newspaper. My assignment was to be the resident left-winger. My first column ran just before the attacks of September 11, 2001. In March of 2002, I began a column with the sentence, "If George W. Bush really wants to clean up politics in this country, the best thing he could do would be to resign." I was a young teacher at a private high school, and readers of the newspaper called the headmaster to try to get me fired. He liked me and had no desire to fire me. He encouraged people who called him to give me a call (my phone number was public), since, he told them, I'm a reasonable guy. "Not one of them would," he said to me a week or so later. "They're cowards. And good for you for speaking up. You and I don't agree about everything, but so what? Why would I ever want to have a teacher here who wasn't truthful to himself? Write what you need to write." A couple readers wrote relatively polite letters to the editor denouncing me for my lack of patriotism. (Now they'd just send an email in ALL CAPS and filled with abusive epithets and maybe a couple death threats for good measure. Those were simpler days...)

I kept at it for a while, and indeed eventually got a few phone calls, and now and then somebody wrote to the school to complain about a person with such unpatriotic and subversive ideas teaching the young and impressionable children, and once a deacon came to the school to speak with me between classes because he thought I needed God. I wasn't especially attached to writing those columns; it was just something that seemed like it needed doing, and I didn't have many other writing opportunities at the time. Eventually, I gave it up for blogging.

I don't think my words changed anybody's minds, I don't think I particularly helped anybody through writing those columns, and I am certain that I could have done more, risked more, donated more, written more. But I look back on it all now with some pride, because lots of people who later said they didn't support the catastrophic U.S. wars in Iraq and Afghanistan really did at the time. It's easy to forget now how much cultural pressure there was after 9/11 to toe the line, to keep your radical ideas to yourself, to not make waves, not buck the trends or upset the ship of state. People said we had to support the President even if we didn't agree with him. People said we needed to be united, even if it meant being united around murder, destruction, and hatred. Some of us disagreed, and the proof of my own stand remains in the pages of the Record Enterprise of Plymouth, NH fifteen years ago.

There are many forces in my life right now saying it would be prudent for me not to associate my own name with seemingly radical beliefs or actions. I don't agree. I will not support Donald Trump or his minions. I will continue to compare them to Hitler and the Nazis as long as their actions and words continue to

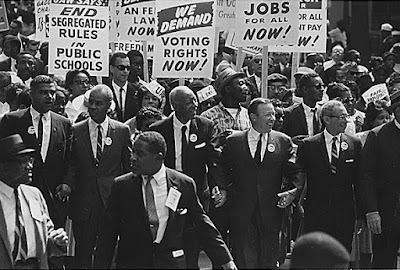

evoke fascism. I will continue to advocate for an academia that seeks ways to shed the neoliberalism that has so corrupted the academic mission, and I will stand with students and faculty and staff who are marginalized, oppressed, precarious. Solidarity forever.

I will do my damnedest not to risk collaboration with oppression. As an American citizen, it's impossible not to have some complicity in the wars and weaponry our taxes pay for, or in the many ways our lifestyles destroy the biosphere, or... well, the list of atrocities we contribute to is long, and the whole country was founded on the sins of genocide and slavery, sins still repercussing at the present moment. I know this. I'm not seeking to hide the double/triple/infinite binds except to say there are some things we do not need to go along with, even as deep in the swamp of complicity as we may be.

It's important to pay attention to attacks and to chilling effects, important to make your own position known, to try hard not to collaborate with a regime of repression. We do what we can, and protect what we must. Some people can only resist quietly. Others will put their lives on the line. What matters is the resistance.

What matters is being able to stand tall and answer honorably when the future asks, "Where were you, when...?"

My publisher, Black Lawrence Press, has announced that for every book they sell through their website from now through the end of the year, they will donate $1 to the American Civil Liberties Union.

I will match this for my own book, Blood: Stories, meaning that every copy sold through the BLP website will also send $2 to the ACLU.

I'm an ACLU member, and pleased with this choice of an organization to support because so many of BLP's authors are among the groups targeted by harassment, civil rights violations, and hate crimes, all of which are on the rise and likely to continue rising.

In the archives of the New York Times, materials about Germany and the rise of the Nazis to power are vast. It would take days to read through it all. Though it would be an informative experience, I don't have the time to do so at the moment, but I was curious to see the general progression of news and opinion as it all happened.

Here are a few items that stuck out to me as I skimmed around:

1932

7 February

10 March

29 May

12 June

1933

8 February

9 February

29 February

5 March

7 March

11 March

12 March

13 March

16 March

19 March

22 March

Electric Literature has published an essay I wrote about Robert Aickman, one of the greatest of the 20th century's short story writers:

Thirty-five years after his death, Robert Aickman is beginning to receive the attention he deserves as one of the great 20th century writers of short fiction. For the first time, new editions of his books are plentiful, making this a golden age for readers who appreciate the uniquely unsettling effect of his work.

Unsettling is a key description for Aickman’s writing, not merely in the sense of creating anxiety, but in the sense of undoing what has been settled: his stories unsettle the ideas you bring to them about how fictional reality and consensus reality should fit together. The supernatural is never far from the surreal. He was drawn to ghost stories because they provided him with conventions for unmaking the conventional world, but he was about as much of a traditional ghost story writer as Salvador Dalí was a typical designer of pocket watches.

Continue reading at Electric Literature.

For more of me on Aickman, see this post about my favorite of his stories, "The Stains".

By: ErinF, on 10/21/2016

Blog: OUPblog

JacketFlap tags: History, Politics, Fidel Castro, America, nuclear war, cold war, Soviet Union, CIA, john f. kennedy, Cuban Missile Crisis, OBO, *Featured, Nikita Khrushchev, Online products, Oxford Bibliographies, Bay of Pigs, Cuban-American relations, jonathan colman, October 1962

The Cuban Missile Crisis was a six-day public confrontation in October 1962 between the United States and the Soviet Union over the presence of Soviet strategic nuclear missiles in Cuba. It ended when the Soviets agreed to remove the weapons in return for a US agreement not to invade Cuba and a secret assurance that American missiles in Turkey would be withdrawn. The confrontation stemmed from the ideological rivalries of the Cold War.

The post The Cuban missile crisis appeared first on OUPblog.

By: Heather Saunders, on 10/21/2016

Blog: OUPblog

JacketFlap tags: Books, Law, television, Media, sexual abuse, child abuse, National Treasure, sex offenders, criminology, *Featured, TV & Film, false allegations, legal trial, Ros Burnett, sex abuse, The press, WASCA, Wrongful Allegations of Sexual and Child Abuse

Many people watching UK television drama National Treasure will have made their minds up about the guilt or innocence of the protagonist well before the end of the series. In episode one we learn that this aging celebrity has ‘slept around’ throughout his long marriage but when an allegation of non-recent sexual assault is made he strenuously denies it.

The post What if they are innocent? Justice for people accused of sexual and child abuse appeared first on OUPblog.

By: KatherineS, on 10/21/2016

Blog: OUPblog

JacketFlap tags: History, war, Technology, military history, guns, weapons, Very Short Introductions, gunpowder, *Featured, Alex Roland, carbon age, chemical age, George Armstrong Custer, history of technology, Little Big Horn, Maxim gun, Norbert Elias, War and Technology: A Very Short Introduction

Few inventions have shaped history as powerfully as gunpowder. It significantly altered the human narrative in at least nine significant ways. The most important and enduring of those changes is the triumph of civilization over the “barbarians.” That last term rings discordant in the modern ear, but I use it in the original Greek sense to mean “not Greek” or “not civilized.” The irony, however, is not that gunpowder reduced violence.

The post The irony of gunpowder appeared first on OUPblog.

By: Carolyn Napolitano, on 10/20/2016

Blog: OUPblog

JacketFlap tags: Books, Literature, Language, q&a, fish, Oxford University Press, oed, oxford english dictionary, etymology, British, Linguistics, lexicographer, *Featured, oxford dictionaries, Peter Gilliver, Dictionaries & Lexicography, au naturel, aumoniere, chalazion, The Making of the Oxford English Dictionary, twiffler

Peter Gilliver has been an editor of the Oxford English Dictionary since 1987, and is now one of the Dictionary's most experienced lexicographers; he has also contributed to several other dictionaries published by OUP. In addition to his lexicographical work, he has been writing and speaking about the history of the OED for over fifteen years. In this two-part Q&A, we learn more about how his passion for lexicography inspired him.

The post Learning about lexicography: A Q&A with Peter Gilliver part 1 appeared first on OUPblog.

By: Celine Aenlle-Rocha, on 10/20/2016

Blog: OUPblog

JacketFlap tags: Books, Music, cello, q&a, author interview, cellist, lincoln center, music teacher, *Featured, Putting it All Together, New York Philharmonic, Alexander Technique, Bow and You, Evangeline Benedetti, NY Phil

What was it like as one of the few female performers in the New York Philharmonic in the 1960s? We sat down with cellist and author Evangeline Benedetti to hear the answer to this and other questions about performance and teaching careers, favorite composers, and life behind the doors of Lincoln Center.

The post In conversation with cellist Evangeline Benedetti appeared first on OUPblog.

By: Kim Behrens, on 10/20/2016

Blog: OUPblog

JacketFlap tags: Books, History, myths, vikings, lord of the rings, Europe, Viking age, runes, Scandinavia, saga, Editor's Picks, *Featured, icelandic saga, old norse, valhalla, beyond the northlands, burial ceremoniesnordic, dragonhead ship, dragonhead ships, eleanor barraclough, horned helmet, ivar the boneless, nordic burials, nordic world, ragnar hairy-breeches, Scandinavian history, viking facts, viking history, viking myths

The viking image has changed dramatically over the centuries: romanticized in the 18th and 19th centuries, they are now alternatively portrayed as savage and violent heathens or adventurous explorers. Stereotypes and clichés are rampant in popular culture, and vikings and their influence appear to various extents, from Wagner's Ring Cycle to the comic Hägar the Horrible, and J.R.R. Tolkien's Lord of the Rings to Marvel's Thor. But what is actually true? Eleanor Barraclough lifts the lid on ten common viking myths.

The post 10 myths about the vikings appeared first on OUPblog.

Dawn again,

and I switch off the light.

On the table a tattered moth

shrugs its wings.

I agree.

Nothing is ever quite

what we expect it to be.

—Robert Dunn

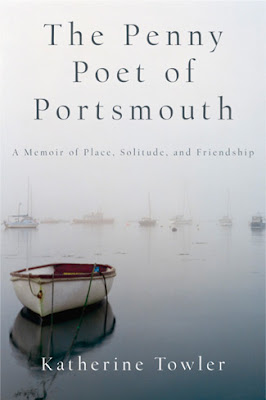

Katherine Towler's deeply affecting and thoughtful portrait of Robert Dunn is subtitled "A Memoir of Place, Solitude, and Friendship". It's an accurate label, but one of the things that makes the book such a rewarding reading experience is that it's a memoir of struggles with place, solitude, and friendship — struggles that do not lead to a simple Hallmark card conclusion, but rather something far more complex. This is a story that could have been told superficially, sentimentally, and with cheap "messages" strewn like sugarcubes through its pages. Instead, it is a book that honors mysteries.

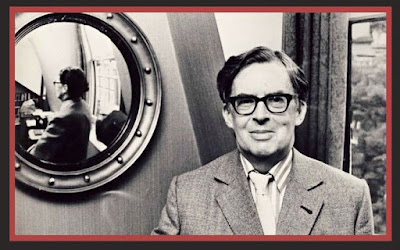

You are probably not familiar with the poetry of Robert Dunn, nor even his name, unless you happen to live or have lived in or around Portsmouth, New Hampshire. Even then, you may not have noticed him. He was Portsmouth's second poet laureate, and an important figure within the Portsmouth poetry scene from the late 1970s to his death in 2008. But he only published a handful of poems in literary journals, and his chapbooks were printed and distributed only locally, and when he sold them himself, he charged 1 cent. (Towler tells a story of trying to pay him more, which proved impossible.) He was insistently local, insistently uncommercial.

[Photo: Robert Dunn]

Dunn was also about as devoted to his writing as a person could be. When Towler met him in the early '90s, he lived in a single room in an old house, owned almost nothing, and made what little money he made from working part-time at the Portsmouth Athenaeum. He seemed to live on cigarettes and coffee. When Towler first saw him around town, she, like probably many other people, thought he was homeless.

If a person could start a conversation with Dunn, which wasn't always easy, they would discover that he was very well read and eloquent. He was not, though, effusive, and he was deeply private. It was only late in his life, wracked with lung disease, that he opened up to anyone, and the person he seems to have opened up most to is Towler, though even then, she was able to learn very little about his past.

Towler got to know him because she was a neighbor, because she was intrigued by him, and because she was a writer on a different career track, with different ambitions. Dunn certainly wanted his poems to be read (he wouldn't have made chapbooks and sold them, even if only for a penny, if not), but he didn't want to subject himself to the quest-for-fame machine, he didn't want to do what everybody now says you must do if you want to have a successful writing career: become a "brand". He didn't bother with any sort of copyright, and Towler quotes a disclaimer from one of his books: "1983 and no nonsense about copyright. When I wrote these things they belonged to me. When you read them they belong to you. And perhaps one other." In 1999, he told a reporter from the Boston Globe, "It just feels kind of silly posting no trespassing signs on my poems."

His motto, written on slips of paper he occasionally gave to people, was "Minor poets have more fun."

When Towler met him, she was struggling with writing a novel. She had lived peripatetically for a while, but had recently gotten married. She wanted to be published far and wide, and in her most honest moments she probably would have admitted that she would have liked to sell millions of copies of her books, get a great movie deal or two, get interviewed by Terry Gross and Charlie Rose, win a Pulitzer and a National Book Award and, heck, maybe even a Nobel. Success.

Once her first novel was published, Towler discovered what many people do in their first experiences with publication: It doesn't fill the hole. It is never enough. There are always other books selling better, getting more or better reviews, always other writers with more money and fame, and in American book culture these days, once a book is a month or two old, it's past its expiration date and rarely of much interest to booksellers, reviewers, or the public, because there's always something new, new, new to grab attention. You might as well sell your poems for a penny in Market Square.

By the time Towler's first novel is published, Portsmouth is deep in the process of gentrification. Not only have the local market, five-and-dime store, and hardware store been put out of business by rising rents and big box stores, but all seven of the downtown bookstores have disappeared. (Downtown is no longer bookstoreless. Bookstores, at least, can appeal to the gentrifiers, though it's still tough to be profitable with the cost of the rent what it is.) Towler gives a reading at a Barnes & Noble out at the mall, a perfect symbol of pretty much everything Robert Dunn had lived his life against: a mall, a chain bookstore. He attends the reading, though, and seems happy for Towler's success. He stands in line to get his book signed, and then says something that cuts Towler to the bone: "I'll let you get to your public."

As Robert turned away, leaving me to face a line of people waiting to have books signed, I felt that he had seen through me to the deep need for approval, the great strivings of ego, that lay at the heart of my desire to publish a book. Only by divorcing myself from the hunger for affirmation, his quip suggested, would I find what I truly desired. This is what he wanted for me, what he wanted for anyone who wrote.

The Penny Poet of Portsmouth continues to think through these ideas, and to work through the contrast between Dunn's fierce localism and solitude and Towler's own conflicting desires, hopes, dreams, and fears. She begins in great admiration of Dunn, who seems to have sacrificed absolutely everything to his writing and has somehow overcome any yearning for fame or reward. In that sense, he seems saintly.

But at what cost? This question is always in the background as Dunn becomes ill and more dependent on other people. If he wants to stay alive, the sort of solitude he cultivated and cherished is no longer possible, leading to some difficult confrontations and tensions.

Towler does her best not to impose her own values on Dunn's life, but she can't help wondering what it would be like to live as Dunn does. Though she sympathizes with him, and shares some of his ideals, she's no ascetic. She can't help but think of Dunn as lonely, since she would be, especially in illness. She cherishes her husband, she likes the house they buy together, she enjoys (at least sometimes) traveling to readings and leading writing workshops.

Dunn's life, as Towler presents it at least, shows the inadequacy of the question, "Are you happy?" In illness, Dunn was obviously not comfortable, and when he couldn't be at home, he was often not content. But happiness is a fleeting thing, not a state of being. Even if you believe that happiness is something that can be captured for more than a moment, there's no reason to think that, except for illness, Robert Dunn was unhappy. He sculpted a life for himself that was, it seems, quite close to whatever life he might have desired having. I doubt having a life partner of some sort would have led to more moments of happiness for him, as Towler wonders at one point. The accommodations and annoyances of having another person around a lot of the time are insufferable for someone inclined toward solitude. Was this the case for Dunn? It's difficult to know, because he was difficult to know. Towler is remarkably restrained, I think, in not trying to impose her own pleasures on Dunn; she doesn't ever record saying to him what the partnered often say to the unpartnered, "Wouldn't you be happy with someone else in your life?" (I often think partnered people work a bit too hard to justify their own life choices, as if the presence of other types of lives is somehow an indictment of their own.) But even still, it does seem to be one of the more unbridgeable gulfs between types of people: the contentedly partnered seem as terrified of being unpartnered as the contentedly unpartnered are of being partnered, and so one looks on the other's life as a nightmare.

What The Penny Poet of Portsmouth shows is the necessity of community. Robert Dunn was, for many reasons, lucky that he lived in Portsmouth when he did, because there was a real community of writers and people interested in writing and reading, and these people looked out for each other. Before the rents went whacko, it was possible to live in Portsmouth if you weren't rich. This is why the word place in Towler's subtitle is so important. The book is not only a portrait of Robert Dunn, but a chronicle of the city that allowed him to be Robert Dunn. Towler is careful to chronicle the details of the place and community, both its people and its institutions. She shows the frustrations of dealing with bureaucracies of illness, and without being heavy-handed she depicts the ways that poverty is perceived in a place as ruggedly individualistic as New Hampshire. Once she gets to know Robert Dunn, she also gets to see, feel, and struggle against the assumptions people who don't know him make. (In some of what she described him experiencing from nurses, doctors, and pharmacists, I couldn't help but think of my father, who did not have health insurance and who died of congestive heart failure. After he had a heart attack, he felt patronized and dismissed by hospitals that knew he couldn't pay his bills. Whether the hospital employees themselves actually felt this way, I don't know, but he perceived it deeply, and it contributed to his determination never to see another doctor or hospital. I'll forever remember the way his voice sounded when he told me, briefly, of this: the shattered pride, the humiliation, the rage all fraying his words.)

In its attention to the details of community (and in a number of other ways), The Penny Poet of Portsmouth makes a perfect companion to Samuel Delany's Dark Reflections, another story of a poet, though in a very different environment. Arnold Hawley in Delany's novel is far less content than Robert Dunn in Towler's memoir, but their commitments are similar. Arnold is more seduced by the desire for fame and success than Dunn seems to have been, and so his personality is perhaps a bit more like Towler's, but without her relative success in everyday living. Having known Dunn, Towler is perhaps more capable of imagining Dunn as content in his life than Delany is quite able to imagine Arnold content. I've sometimes wondered whether Arnold is so tormented by his life because Delany himself would be tormented by that life, a life that is the opposite, in nearly every way, of his own. No matter. The books have much to say to (and with) each other.

There is much in Towler's memoir that is moving. She takes her time and doesn't rush the story. At first, I thought perhaps her pacing was unnecessarily slow, perhaps padding out what was a thin tale. I was wrong, though, because Towler is up to many things in the book, and needs her portrait of the place and person to be built carefully for her ideas and concerns to have resonance and real meaning. It's a tale of growing into knowledge and into something like intimacy, but also, at the same time, of coming to terms with unsolvable mysteries and uncrossable borders.

By the time I got to the fragmentary biography Towler offers as an appendix, those few pages were the most powerful of the book for me, because they show just how much we can't know. (I suppose the power was also one of some sort of personal recognition: Robert Dunn grew up only a dozen or so miles from where I grew up. The soil of his early years is as familiar to me as any other place on Earth. He was a graduate of the University of New Hampshire, where I finished my undergraduate degree and am now working on a Ph.D. I've only spent occasional time in Portsmouth, though enough over the decades to have seen some of the changes Towler writes about.) Primed by all the rest of Towler's story, it was this paragraph that most deeply affected me:

He went south in the summer of 1965 with the civil rights movement with a small group of Episcopalians from New Hampshire. Jonathan Daniels, who was shot and killed as he protected a young African-American woman attempting to enter a store in Hayneville, Alabama, had gone down ahead of the group. Robert would not talk about this experience, though others and I asked him about it directly. He did say once that he had known Daniels.

Had Towler rushed her story more, the resonances and mysteries in that paragraph wouldn't have been nearly as affecting. (It helps if you already know the story of Daniels, I suppose.)

The book ends with a delightful collection of what we might call "Robert Dunn's wit and wisdom", taken from various interviews and profiles in local papers that Dunn had stuck in a folder labeled "Vanity". Of the civil rights movement, he told Clare Kittredge of the Boston Globe in 1999: "We had our hearts thoroughly broken."

Let's end these musings with what mattered most to Robert Dunn, though: the poetry. His poems remind me of Frank O'Hara (whom he discusses with Towler) and Samuel Menashe. They're colloquial and compressed, full of surprises. Thanks to Towler's book, I expect, Dunn's selected poems have finally been collected in One of Us Is Lost, and a few are reprinted in The Penny Poet. Here's one that seems particularly appropriate:

Public Notice

They've taken away the pigeon lady,

who used to scatter breadcrumbs from an old

brown hand and then do a little pigeon dance,

right there on the sidewalk, with a flashing

of purple socks. To the scandal of the

neighborhood. This is no world for pigeon

ladies.

There's a certain wild gentleness in

this world that holds it all together. And

there's a certain tame brutality that just

naturally tends to ruin and scatteration and

nothing left over. Between them it's a very

near thing. This is no world without pigeon

ladies.

Now world, I know you're almost

uglied out, but . . . just think! Try to

remember: What have you done with the

pigeon lady?

—Robert Dunn

Dawn again,

and I switch off the light.

On the table a tattered moth

shrugs its wings.

I agree.

Nothing is ever quite

what we expect it to be.

—Robert Dunn

Katherine Towler's deeply affecting and thoughtful portrait of Robert Dunn is subtitled "A Memoir of Place, Solitude, and Friendship". It's an accurate label, but one of the things that makes the book such a rewarding reading experience is that it's a memoir of struggles with place, solitude, and friendship — struggles that do not lead to a simple Hallmark card conclusion, but rather something far more complex. This is a story that could have been told superficially, sentimentally, and with cheap "messages" strewn like sugarcubes through its pages. Instead, it is a book that honors mysteries.

You are probably not familiar with the poetry of Robert Dunn, nor even his name, unless you happen to live or have lived in or around Portsmouth, New Hampshire. Even then, you may not have noticed him. He was Portsmouth's second poet laureate, and an important figure within the Portsmouth poetry scene from the late 1970s to his death in 2008. But he only published a handful of poems in literary journals, and his chapbooks were printed and distributed only locally; when he sold them himself, he charged 1 cent. (Towler tells a story of trying to pay him more, which proved impossible.) He was insistently local, insistently uncommercial.

Dunn was also about as devoted to his writing as a person could be. When Towler met him in the early '90s, he lived in a single room in an old house, owned almost nothing, and made what little money he made from working part-time at the Portsmouth Athenaeum. He seemed to live on cigarettes and coffee. When Towler first saw him around town, she, like probably many other people, thought he was homeless.

If a person could start a conversation with Dunn, which wasn't always easy, they would discover that he was very well read and eloquent. He was not, though, effusive, and he was deeply private. It was only late in his life, wracked with lung disease, that he opened up to anyone, and the person he seems to have opened up most to is Towler, though even then, she was able to learn very little about his past.

Towler got to know him because she was a neighbor, because she was intrigued by him, and because she was a writer on a different career track, with different ambitions. Dunn certainly wanted his poems to be read (he wouldn't have made chapbooks and sold them, even if only for a penny, if not), but he didn't want to subject himself to the quest-for-fame machine; he didn't want to do what everybody now says you must do if you want to have a successful writing career: become a "brand". He didn't bother with any sort of copyright, and Towler quotes a disclaimer from one of his books: "1983 and no nonsense about copyright. When I wrote these things they belonged to me. When you read them they belong to you. And perhaps one other." In 1999, he told a reporter from the Boston Globe, "It just feels kind of silly posting no trespassing signs on my poems."

His motto, written on slips of paper he occasionally gave to people, was "Minor poets have more fun."

When Towler met him, she was struggling with writing a novel. She had lived peripatetically for a while, but had recently gotten married. She wanted to be published far and wide, and in her most honest moments she probably would have admitted that she would have liked to sell millions of copies of her books, get a great movie deal or two, get interviewed by Terry Gross and Charlie Rose, win a Pulitzer and a National Book Award and, heck, maybe even a Nobel. Success.

Once her first novel was published, Towler discovered what many people do in their first experiences with publication: It doesn't fill the hole. It is never enough. There are always other books selling better, getting more or better reviews, always other writers with more money and fame, and in American book culture these days, once a book is a month or two old, it's past its expiration date and rarely of much interest to booksellers, reviewers, or the public, because there's always something new, new, new to grab attention. You might as well sell your poems for a penny in Market Square.

By the time Towler's first novel is published, Portsmouth is deep in the process of gentrification. Not only have the local market, five-and-dime store, and hardware store been put out of business by rising rents and big box stores, but all seven of the downtown bookstores have disappeared. (Downtown is no longer bookstoreless. Bookstores, at least, can appeal to the gentrifiers, though it's still tough to be profitable with the cost of the rent what it is.) Towler gives a reading at a Barnes & Noble out at the mall, a perfect symbol of pretty much everything Robert Dunn had lived his life against: a mall, a chain bookstore. He attends the reading, though, and seems happy for Towler's success. He stands in line to get his book signed, and then says something that cuts Towler to the bone: "I'll let you get to your public."

As Robert turned away, leaving me to face a line of people waiting to have books signed, I felt that he had seen through me to the deep need for approval, the great strivings of ego, that lay at the heart of my desire to publish a book. Only by divorcing myself from the hunger for affirmation, his quip suggested, would I find what I truly desired. This is what he wanted for me, what he wanted for anyone who wrote.

The Penny Poet of Portsmouth continues to think through these ideas, and to work through the contrast between Dunn's fierce localism and solitude and Towler's own conflicting desires, hopes, dreams, and fears. She begins in great admiration of Dunn, who seems to have sacrificed absolutely everything to his writing and has somehow overcome any yearning for fame or reward. In that sense, he seems saintly.

But at what cost? This question is always in the background as Dunn becomes ill and more dependent on other people. If he wants to stay alive, the sort of solitude he cultivated and cherished is no longer possible, leading to some difficult confrontations and tensions.

Towler does her best not to impose her own values on Dunn's life, but she can't help wondering what it would be like to live as Dunn does. Though she sympathizes with him, and shares some of his ideals, she's no ascetic. She can't help but think of Dunn as lonely, since she would be, especially in illness. She cherishes her husband, she likes the house they buy together, she enjoys (at least sometimes) traveling to readings and leading writing workshops.

Dunn's life, as Towler presents it at least, shows the inadequacy of the question, "Are you happy?" In illness, Dunn was obviously not comfortable, and when he couldn't be at home, he was often not content. But happiness is a fleeting thing, not a state of being. Even if you believe that happiness is something that can be captured for more than a moment, there's no reason to think that, except for illness, Robert Dunn was unhappy. He sculpted a life for himself that was, it seems, quite close to whatever life he might have desired having. I doubt having a life partner of some sort would have led to more moments of happiness for him, as Towler wonders at one point. The accommodations and annoyances of having another person around a lot of the time are insufferable for someone inclined toward solitude. Was this the case for Dunn? It's difficult to know, because he was difficult to know. Towler is remarkably restrained, I think, in not trying to impose her own pleasures on Dunn: she doesn't ever record saying to him what the partnered often say to the unpartnered, "Wouldn't you be happy with someone else in your life?" (I often think partnered people work a bit too hard to justify their own life choices, as if the presence of other types of lives is somehow an indictment of their own.) But even still, it does seem to be one of the more unbridgeable gulfs between types of people: the contentedly partnered seem as terrified of being unpartnered as the contentedly unpartnered are of being partnered, and so one looks on the other's life as a nightmare.

What The Penny Poet of Portsmouth shows is the necessity of community. Robert Dunn was, for many reasons, lucky that he lived in Portsmouth when he did, because there was a real community of writers and people interested in writing and reading, and these people looked out for each other. Before the rents went whacko, it was possible to live in Portsmouth if you weren't rich. This is why the word "place" in Towler's subtitle is so important. The book is not only a portrait of Robert Dunn, but a chronicle of the city that allowed him to be Robert Dunn. Towler is careful to chronicle the details of the place and community, both its people and its institutions. She shows the frustrations of dealing with bureaucracies of illness, and without being heavy-handed she depicts the ways that poverty is perceived in a place as ruggedly individualistic as New Hampshire: once she gets to know Robert Dunn, she also gets to see, feel, and struggle against the assumptions people who don't know him make. (In some of what she described him experiencing from nurses, doctors, and pharmacists, I couldn't help but think of my father, who did not have health insurance and who died of congestive heart failure. After he had a heart attack, he felt patronized and dismissed by hospitals that knew he couldn't pay his bills. Whether the hospital employees themselves actually felt this way, I don't know, but he perceived it deeply, and it contributed to his determination never to see another doctor or hospital. I'll forever remember the way his voice sounded when he told me, briefly, of this: the shattered pride, the humiliation, the rage all fraying his words.)

In its attention to the details of community, and in a number of other ways, The Penny Poet of Portsmouth makes a perfect companion to Samuel Delany's Dark Reflections, another story of a poet, though in a very different environment. Arnold Hawley in Delany's novel is far less content than Robert Dunn in Towler's memoir, but their commitments are similar. Arnold is more seduced by the desire for fame and success than Dunn seems to have been, and so his personality is perhaps a bit more like Towler's, but without her relative success in everyday living. Having known Dunn, Towler is perhaps more capable of imagining Dunn as content in his life than Delany is quite able to imagine Arnold content; I've sometimes wondered whether Arnold is so tormented by his life because Delany himself would be tormented by that life, a life that is the opposite, in nearly every way, of his own. No matter. The books have much to say to (and with) each other.

There is much in Towler's memoir that is moving. She takes her time and doesn't rush the story. At first, I thought perhaps her pacing was unnecessarily slow, perhaps padding out what was a thin tale. I was wrong, though, because Towler is up to many things in the book, and needs her portrait of the place and person to be built carefully for her ideas and concerns to have resonance and real meaning. It's a tale of growing into knowledge and into something like intimacy, but also, at the same time, of coming to terms with unsolvable mysteries and uncrossable borders.

By the time I got to the fragmentary biography Towler offers as an appendix, those few pages were the most powerful of the book for me, because they show just how much we can't know. (I suppose the power was also one of some sort of personal recognition: Robert Dunn grew up only a dozen or so miles from where I grew up. The soil of his early years is as familiar to me as any other place on Earth. He was a graduate of the University of New Hampshire, where I finished my undergraduate degree and am now working on a Ph.D. I've only spent occasional time in Portsmouth, though enough over the decades to have seen some of the changes Towler writes about.) Primed by all the rest of Towler's story, it was this paragraph that most deeply affected me:

He went south in the summer of 1965 with the civil rights movement with a small group of Episcopalians from New Hampshire. Jonathan Daniels, who was shot and killed as he protected a young African-American woman attempting to enter a store in Hayneville, Alabama, had gone down ahead of the group. Robert would not talk about this experience, though others and I asked him about it directly. He did say once that he had known Daniels.

Had Towler rushed her story more, the resonances and mysteries in that paragraph wouldn't have been nearly as affecting. (It helps if you already know the story of Daniels, I suppose.)

The book ends with a delightful collection of what we might call "Robert Dunn's wit and wisdom", taken from various interviews and profiles in local papers that Dunn had stuck in a folder labeled "Vanity". Of the civil rights movement, he told Clare Kittredge of the Boston Globe in 1999: "We had our hearts thoroughly broken."

Let's end these musings with what mattered most to Robert Dunn, though: the poetry. His poems remind me of Frank O'Hara (whom he discusses with Towler) and Samuel Menashe. They're colloquial and compressed, full of surprises. Thanks to Towler's book, I expect, Dunn's selected poems have finally been collected in One of Us Is Lost, and a few are reprinted in The Penny Poet. Here's one that seems particularly appropriate:

Public Notice

They've taken away the pigeon lady,

who used to scatter breadcrumbs from an old

brown hand and then do a little pigeon dance,

right there on the sidewalk, with a flashing

of purple socks. To the scandal of the

neighborhood. This is no world for pigeon

ladies.

There's a certain wild gentleness in

this world that holds it all together. And

there's a certain tame brutality that just

naturally tends to ruin and scatteration and

nothing left over. Between them it's a very

near thing. This is no world without pigeon

ladies.

Now world, I know you're almost

uglied out, but . . . just think! Try to

remember: What have you done with the

pigeon lady?

—Robert Dunn

By: Lizzie Furey, on 10/19/2016

Blog: OUPblog

JacketFlap tags: *Featured, Books, Geography, Brazil, atlas, Sweden, Place of the Year, rio, Atlas of the World, The White House, Aleppo, Quizzes & Polls, Election 2016, brexit, The UK, Tristan da Cunha

Quite a lot has happened in 2016. The year has flown by with history-making events such as Brexit, the presidential election in the United States, and the blockade of Aleppo, to name a few.

The post Place of the Year 2016 longlist: vote for your pick appeared first on OUPblog.

By: Lizzie Furey, on 10/19/2016

Blog: OUPblog

JacketFlap tags: Books, gothic, Oxford Etymologist, church, German, blessing, word origins, curse, Greek, etymology, anatoly liberman, Linguistics, Anglo-Saxon, cursing, *Featured, Word Origins And How We Know Them, The Oxford Etymologist, Old English

Curse is a much more complicated concept than blessing, because there are numerous ways to wish someone bad luck. Oral tradition (“folklore”) has retained countless examples of imprecations. Someone might want a neighbor’s cow to stop giving milk or another neighbor’s wife to become barren.

The post Blessing and cursing part 2: curse appeared first on OUPblog.

By: Eleanor Jackson, on 10/19/2016

Blog: OUPblog

JacketFlap tags: Books, Sociology, Politics, australia, Social Sciences, *Featured, Business & Economics, public servant, british colonies, bureaucracy, Only in Australia, The History Politics and Economics of Australian Exceptionalism, William Coleman, Australian Bureau of Statistics, Battle of Yorktown, colonial regime, Nelson T. Johnson, New World society, officialdom

‘Public Servant’ — in the sense of ‘government employee’ — is a term that originated in the earliest days of the European settlement of Australia. This coinage is surely emblematic of how large bureaucracy looms in Australia. Bureaucracy, it has been well said, is Australia’s great ‘talent,’ and “the gift is exercised on a massive scale” (Australian Democracy, A.F. Davies 1958). This may surprise you. It surprises visitors, and excruciates them.

The post Australia in three words, part 3 — “Public servant” appeared first on OUPblog.

By: Cassandra Gill, on 10/19/2016

Blog: OUPblog

JacketFlap tags: History, American Revolution, America, George Washington, This Day in History, Europe, Alexander Hamilton, Yorktown, *Featured, Online products, War of American Independence, Battle of Yorktown, Admiral Sir George Rodney, Battle of the Saintes, Benjamin Lincoln, Charles O’Hara, Comte de Rochambeau, French navy, Hamilton Yorktown, Lord Cornwallis, Siege of Yorktown, Sir Henry Clinton

The surrender of Lord Cornwallis’s British army at Yorktown, Virginia, on 19 October 1781 marked the effective end of the War of American Independence, at least in North America. The victory is usually assumed to have been Washington’s; he led the army that besieged Cornwallis, marching a powerful force of 16,000 troops down from near New York City to oppose the British. The presence of the young Alexander Hamilton, one of Washington’s aides-de-camp, who led a light infantry unit in the final stages of the siege, adds to the sense of its being a great American triumph.

The post The French Victory at Yorktown: 19 October 1781 appeared first on OUPblog.

By: Heather Smith, on 10/19/2016

Blog: OUPblog

JacketFlap tags: toilet, *Featured, Hezekiah, Iron Age, Arts & Humanities, A Geography of Royal Power in the Biblical World, decommissioning, desecration, Jehu, Judah, Lachish, Levantine, religious sacrifice, sacrileges, Stephen C. Russell, The King and the Land, Books, History, Religion, Middle East, israel, altar

In September, the Israel Antiquities Authority made a stunning announcement: at the ancient Judean city of Lachish, second only to Jerusalem in importance, archaeologists have uncovered a shrine in the city’s gate complex with two vandalized altars and a stone toilet in its holiest section. “Holy crap!” I said to a friend when I first read the news.

The post Holy crap: toilet found in an Iron Age shrine in Lachish appeared first on OUPblog.

By: Marissa Lynch, on 10/18/2016

Blog: OUPblog

JacketFlap tags: Anti-Federalists, Federalists, The Federalist Papers, infographic, *Featured, 1787, party politics, OUP Infographic, american founders, Michael Klarman, revolutionary america, The Anti-Federalist Papers, The Framer's Coup, The Making of the United States Constitution, Books, History, american history, America, founding fathers, Infographics

Between October 1787 and August 1788, a collection of 85 articles and essays was distributed by the Federalist movement. Authored by Alexander Hamilton, James Madison, and John Jay, The Federalist Papers highlighted the political divisions of their time.

The post Federalists and Anti-Federalists: the founders of America [infographic] appeared first on OUPblog.

By: Amelia Carruthers, on 10/18/2016

Blog: OUPblog

JacketFlap tags: global issues, millennium development goals, Social Sciences, *Featured, religious fundamentalism, Online products, world politics, refugee crisis, 2008 financial crisis, brexit, A Dictionary of Contemporary World History, Christopher Riches, History, Politics, global warming, World, civil war, Sudan, Syria, nationalism, catalonia, oxford reference online

Over the past 30 years, I have worked on many reference books, and so am no stranger to recording change. However, the pace of change seems to have become more frantic in the second decade of this century. Why might this be? One reason, of course, is that, with 24-hour news and the internet, information is transmitted at great speed. Nearly every country has online news sites which give an indication of the issues of political importance.

The post Why is the world changing so fast? appeared first on OUPblog.

By: Estefania Ospina, on 10/17/2016

Blog: OUPblog

JacketFlap tags: *Featured, 20th Century Literature, Classics & Archaeology, Henry Green, NYRB classics, Arts & Humanities, Birmingham factory, Bright Young Yorkes, British aristocracy, celebration of Henry Green, Doting, Duchess of York, fiction and non-fiction prose, Nick Shepley, novelists of the 20th century, Literature, Oxford, blindness

Henry Green is renowned for being a “writer’s writer’s writer” and a “neglected” author. The two, it would seem, go hand in hand, but neither is quite true. This list of reasons to read Henry Green sets out to loosen the inscrutability of the man and his work.

The post You have to read Henry Green appeared first on OUPblog.

By: Cassidy Donovan, on 10/17/2016

Blog: OUPblog

JacketFlap tags: What Everyone Needs to Know, sexual assault, *Featured, student protests, WENTK, racial inequality, campus politics, Chief Diversity Officer, Jonathan Zimmerman, political protests, undergraduate education, university administrators, university policies, Books, diversity, Education, Politics, Racism, social change, university, affirmative action

Like their forebears in the 1960s, today’s students blasted university leaders as slick mouthpieces who cared more about their reputations than about the people in their charge. But unlike their predecessors, these protesters demand more administrative control over university affairs, not less. That’s a childlike position. It’s time for them to take control of their future, instead of waiting for administrators to shape it.

The post How university students infantilise themselves appeared first on OUPblog.

By: John Priest, on 10/17/2016

Blog: OUPblog

JacketFlap tags: Books, History, Literature, Data, ireland, nineteenth century, Nineteenth Century Literature, irish lit, nineteenth century europe, nineteenth century ireland, ordnance survey, *Featured, big data, Arts & Humanities, european literature, Cóilín Parsons, data science, Irish Literature

Initially, they had envisaged dozens of them: slim booklets that would handily summarize all of the important aspects of every parish in Ireland. It was the 1830s, and such a fantasy of comprehensive knowledge seemed within the grasp of the employees of the Ordnance Survey in Ireland.

The post Big data in the nineteenth century appeared first on OUPblog.

By: Eleanor Jackson, on 10/17/2016

Blog: OUPblog

JacketFlap tags: Social Sciences, *Featured, Business & Economics, chinese economy, state owned enterprises, Yu Zhou, global markets, China as an Innovation Nation, China Railroad Corporation, high speed railway, Huawei, Tencent, Books, china, beijing, shanghai, entrepreneurs, innovation, Intellectual property rights, Shenzhen

The writing is on the wall: China is the world's second-largest economy, and its growth rate has slowed sharply. Wages are rising, so that the fabled army of cheap Chinese labor is now among the most costly in Asian emerging economies. In the last thirty years, China has brought hundreds of millions of people out of poverty, but this miracle will stall unless China can undertake another transformation and become an innovation nation.

The post The transition of China into an innovation nation appeared first on OUPblog.

By: Begina Slawinska, on 10/17/2016

Blog: OUPblog

JacketFlap tags: *Featured, international law, environmental law, JEL, Journal of Environmental Law, brexit, environmental accountability, EU Commission, EU governance, Maria Lee, Law, UK, Journals

Civil society will be preoccupied in the years to come with ensuring the maintenance of environmental standards formerly set by EU environmental law. This blog provides some thoughts on the less visible aspects of EU environmental governance, aspects that must be held up to scrutiny as we develop an accountability framework ‘independent’ of the rules and institutions of the European Union.

The post Brexit: environmental accountability and EU governance appeared first on OUPblog.

By: Anna Shannon, on 10/16/2016

Blog: OUPblog

JacketFlap tags: Books, parenting advice, child nutrition, infant health, Nutrition for Developing Countries, Nutrition for Developing Countries Third Edition, baby development, baby Nutrition, care group, first 1000 days, Jennifer N. Nielsen, pregnancy nutrition, motherhood, parenting, child development, nutrition, pregnancy, Pediatrics, *Featured, Science & Medicine, Health & Medicine, Ann Burgess, 1000 days

Nowadays we use the term ‘first 1000 days’ to mean the time between conception and a child’s second birthday. We know that providing good nutrients and care during this period is key to child development and to giving a baby the optimum start in life.

The post The first 1000 days appeared first on OUPblog.
