
Viewing: Blog Posts Tagged with: rights, Most Recent at Top

Results 1 - 21 of 21


By: KatherineS,

on 9/11/2015

Blog:

OUPblog


JacketFlap tags:

History,

democracy,

American Revolution,

slavery,

anniversary,

VSI,

rights,

British,

citizens,

Very Short Introductions,

monarchy,

*Featured,

magna carta,

VSI online,

Arts & Humanities,

Habeas Corpus,

10 things you need to know,

Nicholas Vincent,


This year marks the 800th anniversary of one of the most famous documents in history, the Magna Carta. Nicholas Vincent, author of Magna Carta: A Very Short Introduction, tells us 10 things everyone should know about the Magna Carta.

The post 10 things you need to know about the Magna Carta appeared first on OUPblog.

By: DanP,

on 7/10/2014

Blog:

OUPblog


JacketFlap tags:

Books,

Law,

Videos,

legislation,

Current Affairs,

freedom of expression,

Multimedia,

rights,

melbourne,

collins,

matthew,

confidentiality,

defamation,

*Featured,

individual rights,

defamation law,

Collins on Defamation,

defamation sct 2013,

dr matthew collins,

Lord Neuberger,

neuberger,

vkudax8dvog,

y4qx9ln0bde,

abbotsbury,


Freedom of expression is a central tenet of almost every modern society. This freedom however often comes into conflict with other rights, and can be misused and exploited. New media – especially on the internet – and new forms of media intrusion bring added complexity to old tensions between the individual’s rights to reputation and privacy on the one hand, and freedom of expression and the freedom of the press on the other.

How should free speech be balanced with the right to reputation? This question lies at the heart of defamation law. In the following videos, Lord Neuberger and Dr Matthew Collins QC discuss current challenges in defamation law, and the implications of recent changes to legislation enacted in the Defamation Act 2013. Lord Neuberger highlights urgent issues including privacy, confidentiality, data protection, freedom of information, and the Internet.

In this video, he draws attention to recent high-profile events such as the Leveson Inquiry and the phone-hacking trials, and points up key features of the new legislation.

Click here to view the embedded video.

Dr Matthew Collins QC outlines his perspective on the likely long-term impact of the 2013 Act.

Click here to view the embedded video.

The Rt Hon the Lord Neuberger of Abbotsbury Kt PC is President of the Supreme Court of the United Kingdom. Dr Matthew Collins QC is a barrister based in Melbourne, Australia. He is also a Senior Fellow at the University of Melbourne, a door tenant at One Brick Court chambers in London, and the author of Collins on Defamation.

Subscribe to the OUPblog via email or RSS.

Subscribe to only law articles on the OUPblog via email or RSS.

The post Free speech, reputation, and the Defamation Act 2013 appeared first on OUPblog.

By: Alice,

on 1/22/2013

Blog:

OUPblog


JacketFlap tags:

History,

US,

social justice,

Current Affairs,

rights,

abortion,

pro-life,

pro-choice,

Roe v. Wade,

partisan politics,

binghamton,

reproductive rights,

*Featured,

Law & Politics,

How Sex Became a Civil Liberty,

Leigh Ann Wheeler,

moral majority,

reproductive freedom,

abortion,

quickening,


By Leigh Ann Wheeler

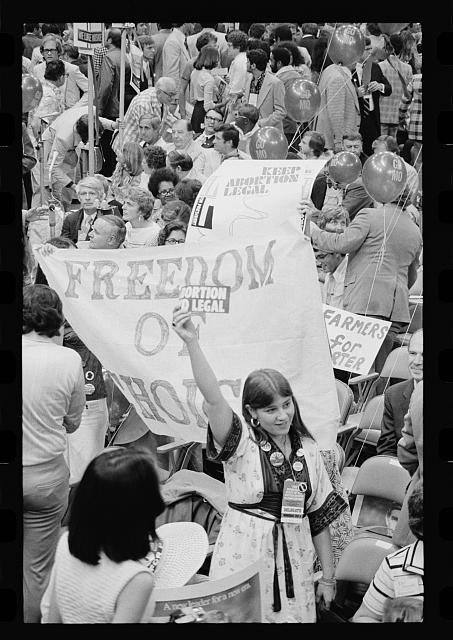

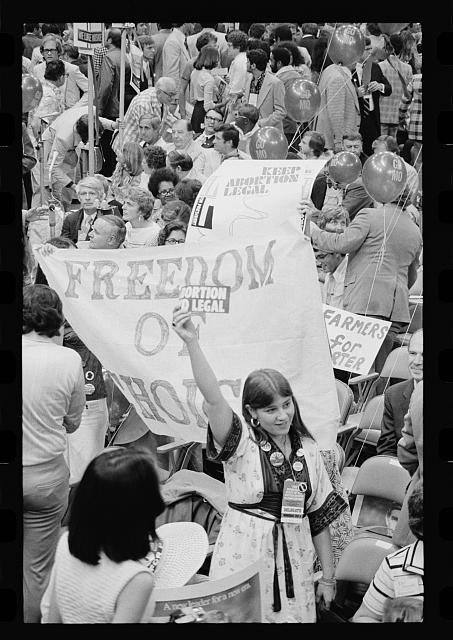

Demonstration protesting anti-abortion candidate Ellen McCormack at the Democratic National Convention, New York City. Photo by Warren K. Leffler, 14 July 1976. Source: Library of Congress.

“My Body, My Choice!”

“Abortion Rights, Social Justice, Reproductive Freedom!”

Such are today’s arguments for upholding Roe v. Wade, whose fortieth birthday many of us are celebrating.

Others are mourning.

“It’s a child, not a choice!”

“Abortion kills children!”

“Stop killing babies!”

How did we arrive at this stunningly polarized place in our discussion — our national shouting match — over women’s reproductive rights?

Certainly it wasn’t always this way. Indeed, consensus and moderation on the issue of abortion were the rule until recently.

Even if we go back to biblical times, the brutal and otherwise misogynist law of the Old Testament made no mention of abortion, despite popular use of herbal abortifacients at the time. Moreover, it did not treat a person who caused a miscarriage as a murderer. Fast-forward several thousand years to North American indigenous societies where women regularly aborted unwanted pregnancies. Even Christian Europeans who settled in their midst did not prohibit abortion, especially before “quickening,” or the appearance of fetal movement. Support for restrictions on abortion emerged only in the 1800s, a time when physicians seeking to gain professional status sought control over the procedure. Not until the twentieth century did legislation forbidding all abortions begin to blanket the land.

What happened during those decades to women with unwanted pregnancies is well documented. For a middle-class woman, a nine-month “vacation” with distant relatives, a quietly performed abortion by a reputable physician, or, for those without adequate support, a “back-alley” job; for a working-class woman, nine months at a home for unwed mothers, a visit to a back-alley butcher, or maybe another mouth to feed. Women made do, sometimes by giving their lives, one way or another.

But not until the 1950s did serious challenges to laws against abortion emerge. They began to gain a constitutional foothold in the 1960s, when the Planned Parenthood Federation of America and the American Civil Liberties Union (ACLU) persuaded the US Supreme Court to declare state laws that prohibited contraceptives in violation of a newly articulated right to privacy. By the 1970s, the notion of a right to privacy actually cut many ways, but on January 23, 1973, it cut straight through state criminal laws against abortion. In Roe, the Supreme Court adopted the ACLU’s claim that the right to privacy must “encompass a woman’s decision whether or not to terminate her pregnancy.” But the Court also permitted intrusion on that privacy according to a trimester timetable that linked a woman’s rights to the stage of her pregnancy and a physician’s advice; as the pregnancy progressed, the Court allowed the state’s interest in preserving the woman’s health or the life of the fetus to take over.

Roe actually returned the country to an abortion law regime not so terribly different from the one that had reigned for centuries if not millennia before the nineteenth century. The first trimester of a pregnancy, or the months before “quickening,” remained largely under the woman’s control, though not completely, given the new role of the medical profession. The other innovation was that women’s control now derived from a constitutional right to privacy — a right made meaningful only by the availability and affordability of physicians willing to perform abortions.

With these exceptions, the Supreme Court’s decision in Roe did little more than return us to an older status quo. So why has it left us screaming at each other over choices and children, rights and murder?

There are many answers to this question, but a major one involves partisan politics.

On the eve of Roe, to be a Catholic was practically tantamount to being a Democrat. Moreover, feminists were as plentiful in the Republican Party as they were in the Democratic Party. Not so today, on the eve of Roe’s fortieth birthday. Why?

As the Catholic Church cemented its position against abortion and feminists embraced abortion rights as central to a women’s rights agenda, politicians saw an opportunity to poach on their opponent’s constituency and activists saw an opportunity to hitch their fortunes to one of the two major parties. In the 1970s, Paul Weyrich, the conservative activist who coined the phrase “moral majority,” urged Republicans to adopt a pro-life platform in order to woo Catholic Democrats. More recently, the 2012 election showed us Republican candidates who would prohibit all abortions — at all stages of a pregnancy and even in cases of rape and incest — and a proudly, loudly pro-choice Democratic Party.

In the past forty years, abortion has played a major role in realigning our major political parties, associating one with conservative Christianity and the other with women’s rights — a phenomenon that has contributed to the emergence of a twenty-point gender gap, the largest in US history. Perhaps, then, it is no surprise that we are screaming at each other.

Leigh Ann Wheeler is Associate Professor of History at Binghamton University. She is co-editor of the Journal of Women’s History and the author of How Sex Became a Civil Liberty and Against Obscenity: Reform and the Politics of Womanhood in America, 1873-1935.


Subscribe to only American history articles on the OUPblog via email or RSS.

The post Choices and rights, children and murder appeared first on OUPblog.

There has been a lot of great writing about copyright and access to our cultural and intellectual history in the weeks since Aaron Swartz’s death. I have been revisiting some of my old favorite haunts to see if there was anything I didn’t know about the status of access to online information, especially from the public domain era (pre-1923 in the US).

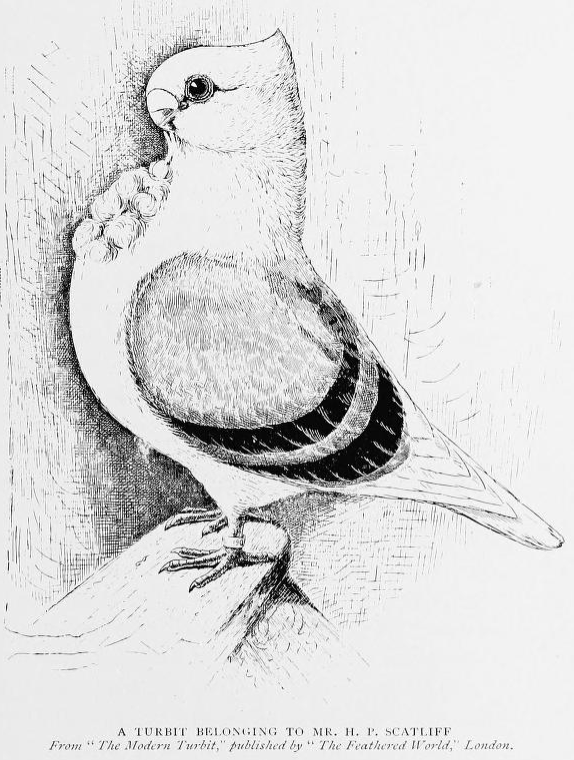

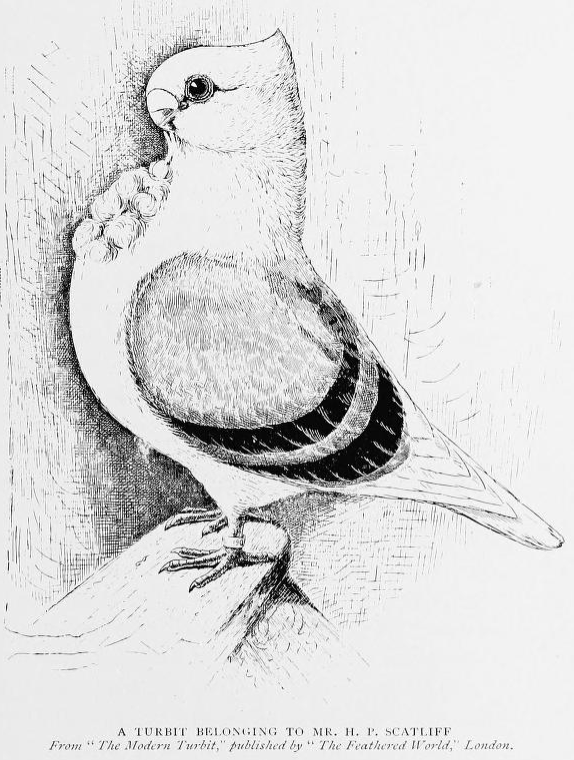

I talk like a broken record about how I think the best thing that libraries can do, academic libraries in particular, is to make sure that their public domain content is as freely accessible as possible. This is an affirmative decision that Cornell University made in 2009 and I think it was the right decision at the right time and that more libraries should do this. Some backstory on this.

So, if I want to share an image from a book that Cornell has made available, I check the guidelines linked above, and then I can link to the image. You can go see it, and then you can link to the image and do whatever you want with it, including sell it. This is public domain. The time and money that went into making a digital copy of this image have been borne by the Internet Archive and Cornell University. The rights page on the item itself (which I can download in a variety of formats) is clear and easy to understand.


Compare and contrast JSTOR. Now let me be clear: I am aware that JSTOR is a (non-profit) business and Cornell is a university, and I am not saying that JSTOR should just make all of its public domain material free for everyone (though that would be nice); I am just outlining the differences as I see them in accessing content there. I had heard that there were a lot of journals on JSTOR that were freely available even to unaffiliated people like myself, so I decided to go looking for them. I found two different programs: the Register and Read program (where registered users can access a certain number of JSTOR documents for free) and the Early Journal Content (EJC) program. There’s no front door to the EJC program, that I saw; you have to search JSTOR first and then limit your search to “only content I can access.” Not super-intuitive, but okay. And I’m not trying to be a pill, but a search on the about.jstor.org site for “public domain” gets you zero results, as do searches for “early journal content” and for “librarian.” Actually, I get the same results when I search their site for JSTOR. Something is broken; I have written them an email.

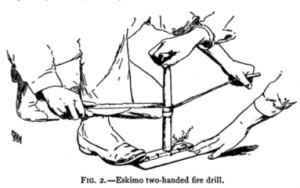

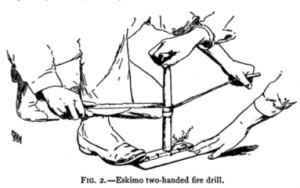

So I go to JSTOR and do a similar search, looking for only “content I can access,” and pick up the first thing that’s pre-1923, which is an article about Aboriginal fire making from American Anthropologist in 1890. I click through and agree to the Terms of Service, which are almost 9000 words long. Only the last 260 words really apply to EJC. Basically I’ve agreed to use it non-commercially (librarian.net accepts no advertising, so I am in the clear) and not to scrape their content with bots or other devices. I’ve also seemingly acquiesced to crediting them and using the stable URL, though that doesn’t let me deep-link to the page with the image on it, so I’ve crossed my fingers and deep-linked anyhow. I’m still not sure what I would do (contact JSTOR, I guess) if I wanted to use this document in a for-profit project. Being curious, I poked around to see if I could find this public domain document elsewhere, and sure enough, I could.


At that point, I quit looking. I had found a copy that was free to use. This, however, meant that I had to be good at searching, quite persistent, and unwilling to take “Maybe” as an answer to “Can I use this content?” I know that when I was writing my book, my publishers would not have taken maybe for an answer; they were not even that thrilled to take Wikimedia Commons’ public domain assertions.

As librarians, I feel we have to be prepared to find content that is freely usable for our patrons, not just content that is mostly freely usable or content where people are unlikely to come after you. As much as I’m personally okay being a test case for some sort of “Yeah I didn’t read all 9000 words on the JSTOR terms and conditions, please feel free to take me to jail” case, realistically that will not happen. Realistically the real threat of jail is scary and terrible and expensive. Realistically people bend and decide it’s not so bad because they think it’s the best they can do. I think we can probably do better than that.

Following Scott's excellent post on Banned Books Week, I wanted to add my personal experience regarding this topic.

I was born in Argentina in 1977, at the peak of the last military dictatorship. The society in which I was born and raised was oppressed for years until the people united against tyranny and said "Nunca Mas," Never Again. When I was young, there were a lot of things that weren't available to me and the rest of the population, among them books, music, and theater. Whatever made it to the public was dubbed in Spanish, with all the consequences this brings: the message was diluted to what a small group of people thought was acceptable for society. In fact, it wasn't until I was in my twenties that I read Little Women in English for the first time and discovered that several paragraphs and whole chapters had been deleted from the translated version I had memorized as a child. I felt like I had been hit in the stomach by a futbol going a hundred miles an hour (and I have been, in real life; I know that feeling very well).

Among other things, I had never even heard of The Hobbit or of The Lord of the Rings trilogy. When I arrived at BYU, one of the first things I did was go to the library. I was overwhelmed by the number of books that filled the walls and countless shelves of not one floor, but five! I could have stayed there forever and never gone to class. In fact, if I had never stepped into a classroom but had been allowed to spend as much time as I wanted in that library, I would have been satisfied.

Fortunately, I did go to class; one of the first was an English honors class in which we discussed The Lord of the Rings. I remember the very first quiz. I studied for hours, unused to the difficult language of the book (English is my second language, after all, and Tolkien's wasn't the English I had studied for years).

I was dismayed when I read the questions. I had no idea who Bilbo was, and there were five questions on this character. When I complained to the professor, he said he had included questions from The Hobbit, and since it was popular culture we all should know it.

I still disagree with his logic, although it makes sense in a way. Eventually I did very well in that class, and I think the professor had a reality check: not all students came from the same background and culture, and as a consequence they defined popular culture a little differently than he did.

Where I'm going with this is that no small group of people has the right to say what I am allowed to consider writing/reading/seeing/saying. During the military dictatorship, countless artists were exiled from Argentina because their work was deemed revolutionary or anti-patriotic.

When I was in high school I had the blessing of being friends with a group of girls who, like me, loved reading and discussing the ideas we read. We borrowed and lent books to each other, and we talked. There were many books I read that I didn't like. But I could read them, or put them away if I didn't want to continue giving my time to something I didn't enjoy. Yesterday, I was reading a Stephen King book in English for the first time, and I reached a passage that really disturbed me because of the violence. I put it away. Do I think no one should ever read that book? No. Everyone has the right to read whatever they please. My son is almost twelve, and he's a read-a-holic like his mother. However, there are some books I don't want him to read yet. There is plenty of time for some things. But when he's old enough, they'll be available for him.

Sometimes when we read these now-popular dystopian books, we as readers are horrified by some aspects of those fictional societies. I'm horrified because I lived in one, and the effects of the lack of freedom TO THINK are devastating. My country still hasn't recovered.

Read books. Banned or not. Think for yourself. Give others the same right.

Naomi Cartwright has always loved stories (although she’s often tempted to read endings first). It was no surprise to her family that she did an English Studies degree at the University of Nottingham before moving to London to work in Children’s Publishing. Naomi is now a Senior Rights Executive at Hachette Children’s Books and has previously worked at Puffin and Orion. She also writes short

By: GraemeNeill,

on 9/22/2011

Blog:

Schiel & Denver Book Publishers Blog


JacketFlap tags:

Home,

booksellers,

Agents,

publishers,

digital,

Rights,

Benedicte Page,

Anthony Goff,

Association of Authors Agents,


Agents who wish to publish their clients’ work must offer them a detailed explanation of what they will personally gain from the arrangement and obtain their full written agreement, the president of the Association of Authors’ Agents has said.


Doubleday has bought two books by a debut Spanish crime author, with the first set in contemporary Barcelona.

Editorial director Jane Lawson has acquired world English language rights from Mondadori Spain to the two titles by Antonio Hill.


Egmont has won the publishing contract for the latest preschool series to run on CBeebies, Baby Jake, which launched in July and which is expected to be the next big children’s hit.

Baby Jake was developed by Darrall Macqueen and combines storytelling, live action and 2D photographic animation and is focused on two young siblings.

Since launching on 4 July, Baby Jake has been a permanent fixture in the CBeebies iPlayer ‘Top Five’ and grew from 240,000 viewings in week one to half a million viewings in week two.


By: Editorial Anonymous,

on 1/19/2011

Blog:

Editorial Anonymous


JacketFlap tags:

rights,


I had a picture book published in 2006 which is now out of print and the rights have been returned to me. Is it okay to submit this to other publishers, and if yes, then when is it okay to do this? And if I can submit this do I mention its previous publication? Thanks for your help.

Yes, you mention its previous publication. The editor will find out anyway when she does her acquisition research, and she will be pissed if you've failed to tell her this yourself.

Here's the thing about books that have gone out of print: most of them are out of print for a very, very good reason. It may be a painful reason, and it may be a reason that makes no sense to you, but it is still a GOOD reason: NOT ENOUGH PEOPLE WERE WILLING TO BUY IT.

If this is the reason that your book is out of print, then no publisher is going to bring it back into print within a couple of decades of its original publication. If this is not the reason your book is out of print, then be very clear in your submission to other publishers about what you think the real reason is. Be clear, and be convincing, because you're fighting a counterargument from the market, and publishers listen to the market.

By: Editorial Anonymous,

on 1/17/2011

Blog:

Editorial Anonymous


JacketFlap tags:

rights,


In 2006 I had a mid-grade novel accepted for publication and the publisher and I agreed a sequel would be a good idea so I got onto writing that and submitted this at the beginning of 2008. The first book came out late 2008 and the sequel was scheduled for 2009. Then the recession hit and the publisher reduced their list and pulled the plug on the sequel. I get regular queries from readers about when the second book is coming. This year I discussed the possibility of getting both books published overseas and the publisher returned international rights to me while retaining local regional rights to the first book. Now I want to query both books to the US and UK but I’m not quite sure how to go about this. Do I send/query the first book in its final form or as a manuscript?

Depends on whether you think its published Australian form does the book proud in the US market. Some Aussie publications do, and some don't. Sometimes I see books published in foreign countries and the cover style is so far off from what would work for us here that it inspires a strongly negative reaction even though I know that this reaction is irrational and unfair to the book. If you don't think its published presentation is stunning, send it as a manuscript and include a page with its Aussie cover and publishing info. Be prepared to answer the question "Why didn't the Australian publisher submit this to us for foreign rights?" In fact, do you know that the Australian publisher didn't? Generally we don't like being sent the same thing we said 'no' to a year ago by someone else.

If they were interested would publishers keep it in its first published form?

Unlikely, but possible.

Am I doomed? Is there hope? I would greatly appreciate any advice you could give me on this non-run-of-the-mill problem.

With the economy in the state it is, there's a little more doom running around than there used to be, but no, you're not out of the race yet. Still, this is going to be tough going, so be sure you want to spend this effort on this book, rather than investing it in writing a new book.

I love seeing our titles translated into multiple foreign languages and enjoyed by children around the world. While all our books are of high quality, not all of them will work in all countries. Here are a few interesting things I’ve learned about selling our titles at international book fairs:

- Science and math titles always get the attention of both rights agents and distributors, especially in the East-Asian market. All I need to do is flip to the catalog page featuring a new Robert E. Wells title and a contract will be signed within months.

What's So Special about Planet Earth? in Spanish

- American holiday titles and illustration styles considered “American”, however, generally do not stir interest overseas.

- Surprisingly, we get some interest from the Korean market in African American titles, which publishers there see as encouraging, obstacle-overcoming lessons.

- Speaking of the Korean market, our title Princess K.I.M. and the Lie That Grew put a smile on all the Korean publishers’ faces when it was first presented. Why? Because Kim is a very popular surname in Korea!

Princess K.I.M. and the Lie That Grew in Korean

- Newborn babies and titles on siblings are generally not too popular in China due to the One Child Policy.

- Muslim regions do not accept any stories with pigs in them.

- In general, illustrated animal characters sell. The cuter they are, the more sample review copies are requested.

When I Feel Sad in Chinese

- We also learned throughout the years that bunnies do particularly well in Germany.

Do you know of any other fun facts that I missed? Feel free to share them with us!

0 Comments on Selling Books Overseas as of 1/1/1900


Last weekend I had the pleasure and privilege of sharing a stage with the wonderful Mary Hoffman at the Ilkley Festival. We were talking about the different ways in which we fictionalize Venice. And one of the questions that came up was this: ‘How do you both feel when you see yet another novel about Venice hitting the bookshop shelves?’

Neither of us owns Venice. We both earn our right to write about Italy novel by novel. But we did admit to a flicker of annoyance at books that cynically employ the undeniable commercial lustre of Venice to gild their lily – or to put a velvet bow on their dog.

Now I have returned from Ilkley to Italy … only to discover that Mary and I have both been thoroughly trumped in our attempts to write a properly Venetian Venice … perhaps. For a Venetian gondolier has just been and gone and published a novel.

Sad to tell, Angelo Tumino’s novel contains nothing of moonlight, romance or lapping waves. Invasione Negata takes the form of a diary of a widowed retired engineer who finds himself living in a condominium in the suburbs of Rome, surrounded by immigrants who speak other tongues, cook foreign foods – and persecute, rob and attack the native Italians.

Tumino, 36, claims that he is a gondolier only by economic necessity: his true calling is as a writer. He’s hoping that Invasione negata will lift him away from a life at the oar and into a properly literary existence in front of a computer.

Instead, the slim volume has so far propelled Tumino into controversy. The author claims that the book’s intention is to document the most profound fear that strikes the rich nations of the west – fear of the foreigner. He claims that the politicians are incapable of solving the problems and it is the ordinary citizens who pay the costs of clandestine immigration.

‘I would say it is a tale of metropolitan conflict,’ says Tumino.

What he is not saying, according to the newspaper La Nuova, is whether he’s a member of the right-wing anti-immigration Lega Nord. But that’s not stopping others from labelling him that, and worse. The Indymedia Lombardia website has written a profile of Tumino entitled ‘The Nazi Gondolier’, and describes his work as ‘di chiaro stampo hitleriano’ (‘of a clearly Hitlerian stamp’). But the site has been much criticized for the intemperance of its coverage, and elsewhere the novel and its writer have been highly praised.

Tumino protests that the character depicted in the novel is not a self portrait. He adds ‘Reading Stephen King, one might think that this is an author with psychological problems. But in fact he is a totally normal person.’

Invasione negata had its official launch at the fish market in Venice on October 12th, as its author (still) serves on the traghetto between Santa Sofia and Rialto.

In Tumino's top drawer are two other books: a collection of comic short stories, The Gondolier without a Gondola, and American Gondolier, a science fiction story set in a Venice that has been bought up by the Americans and is inhabited by android gondoliers.

So is it still safe for Mary and me to go in the water, with our historical novels about Venice and Italy?

LINKS

Mary Hoffman’s Stravaganza series starts in Venice, with City of Masks. The latest book in the series, City of Ships, has just been published by Bloomsbury.

Rosemarie Garland-Thomson is Associate Professor in the Department of Women’s Studies at Emory University. She was recently named one of 2009’s “50 Visionaries Who Are Changing Your World” by UTNE Reader. Her most recent book, Staring: How We Look, captures the stimulating combination of symbolic, material and emotional factors that make staring so irresistible while endeavoring to shift the usual response to staring, shame, into an engaged self-consideration. In the original post below she looks at end-of-life issues and the health care debate.


Democracy thrives on polarized debates, theatrical performances that try to convince citizens about how to spend their dollars and place their votes. Statements get especially extravagant when we are discussing important policy issues that affect such sensitive personal issues as how we take care of each other when we are sick, vulnerable, hurt, or dying. Our recent debate about health care has flared especially intensely about end-of-life and life ending issues. That the inevitable outcome of life is death is a hard pill for us all to swallow. Health maintenance is a more comfortable and cheerful topic for us ever optimistic Americans than the uncompromising truth of our impending mortality.

One of the more vivid concepts to emerge from the health care debate is the provocative concept of pulling the plug on granny. The image of our granny shorn from life-sustaining sustenance, care, and support cuts both ways, calling up tender sympathy in some and tough pragmatism in others. A forlorn granny is code for the larger issue of how to make difficult decisions about not just distributing resources but who we think deserves those resources. In other words, the figure of granny lets us consider who we think of as deserving and valued fellow citizens, of who we want to be in our human community.

One way we frame this is through a cost-benefit analysis of what we imagine to be a high or low quality of life. One reason we might pull the plug on granny is that the quality of her life seems low to those of us who are not old, sick, or disabled. Moreover, we understand granny to be using up more resources than she is contributing to society. People on both sides of the health care debate have brought forward the most extreme example from history of where evaluating the quality of other people’s lives can lead: between 1939 and 1942, the Nazi regime undertook an official euthanasia program. More recently, questions of life quality and resource distribution sprang forward with the revelation that a number of grannies and other significantly disabled people at a New Orleans hospital might have been euthanized during the Katrina disaster. These troubling occurrences, one then and the other now, remind us of the continuing communal struggle to decide what the democratic premise of equality among citizens might actually mean.

The contemporary British version of our American granny is the physicist Stephen Hawking, whose imagined low quality of life, based on his significant disability, contrasts starkly with the value of his contribution as a brilliant scientist. Hawking is, of course, an exception to the usual way we consider the grannies of the world. Those who offered up Hawking as an example of a person whose plug might be pulled by a reformed health care system were surprised when Hawking said that the British health care system had provided him with the plugs he needed for a quality life, through which he made his important contributions.

The late Harriet McBryde Johnson, a civil rights attorney and advocate for disability rights, made public a discussion about plug pulling with the Princeton ethicist Peter Singer, who has advocated euthanizing disabled newborns as a form of moral pragmatism when parents get a child they would prefer not to have. Johnson, who like Hawking lived with significant disabilities, put herself forward in the pages of the New York Times Magazine in 2003 to show the public how someone we imagine as having a very low quality of life in fact has a very high one. In doing so, she offered us an opportunity to think through how we distribute resources and what a valuable life might be.

People like Stephen Hawking and Harriet McBryde Johnson, as well as our frail grannies, Katrina victims, and disabled German citizens under fascism, remind us that the conversation about who should and should not be in the world, to use Hannah Arendt’s phrase, is an urgent and confusing one today.

Today’s health care debate and its polarizing icons point to a less dramatic and often unnoticed contradiction between two opposing currents in American culture. On the one hand is the endeavor to integrate people with disabilities into the public world by creating an accessible, barrier-free material environment. On the other hand is the medical mission to eliminate people with disabilities from the human community. What we might call the “integration initiative” arises from a rights-based understanding of disability and advances through legislative and policy mandates such as the Americans with Disabilities Act of 1990 and the ADA Amendments Act of 2008. In contrast, the “elimination initiative” arises from the idea that social improvement requires eliminating devalued human qualities, and the people who have them, in the interest of reducing human suffering, increasing life quality, and building a more desirable citizenry.

This contradiction in beliefs has filled the contemporary American public landscape with both fewer and more people with disabilities. For instance, wheelchair users now enter public spaces, transportation, employment, and commercial culture on a scale that was impossible before the legal mandates of the 1970s began to change the built environment. At the same time, selective reproductive procedures increasingly identify and eliminate potential wheelchair users born with traits such as spina bifida, which often requires wheelchair use for effective mobility. In another example, people with developmental and cognitive disabilities are now educated in integrated, mainstream settings that accommodate their educational needs, rather than in segregated institutions. Simultaneously, medical technology routinely identifies fetuses with Down syndrome (trisomy 21), flagging those pregnancies for possible termination.

The point is that not just what we do with granny’s plugs but how we imagine granny’s life reaches out beyond the nursing home room and into our shared world, affecting who we are and want to be as a human community.

I was told recently by a published writer that an unsolicited submission literally belongs to the publisher until they tell me they're either buying or rejecting it.

This is madness! Are you kidding me? See this stuff drives me crazy! Who gives this kind of crackpot advice? See, this is why agents started blogs in the first place and why we so often have to repeat ourselves over and over again (not that I’ve ever had to address this question before). My goal, with this whole blog, is wrapped up in this one question. I am hoping to help eliminate this kind of wrong, wrong, wrong advice to poor unassuming authors. Thank goodness you’re heading in the right direction by finding agent blogs.

If you haven’t figured it out yet, this so-called published writer is very wrong. An unsolicited submission, or a solicited one for that matter, belongs to you. Sure, the publisher can hold on to it for as long as they want, and agents can do the same thing, but that doesn’t mean they have any control over it. You can sell it somewhere else, pull it from submission, or just plain let it sit there for years if you want. No one owns that work but you.

Jessica

By: Rebecca,

on 6/22/2009

Blog:

OUPblog

JacketFlap tags:

gay,

Politics,

Current Events,

American History,

A-Featured,

Bush,

Barack Obama,

Obama,

Clinton,

rights,

Barack,

Bill Clinton,

leadership,

George,

Bill,

gay rights,

George Bush,

DOMA,

Elvin Lim is Assistant Professor of Government at Wesleyan University and author of The Anti-intellectual Presidency, which draws on interviews with more than 40 presidential speechwriters to investigate the relentless qualitative decline, over the course of 200 years, in our presidents’ ability to communicate with the public. He also blogs at www.elvinlim.com. In the article below he reflects on Presidents Obama and Bush. See his previous OUPblogs here.

Presidents array themselves along a continuum with two extremes: either they are crusaders for their cause or merely defenders of the faith. Either they attempt to transform the landscape of American politics, or they attempt to modify it in incremental steps. To cite the titles of books by the current and last presidents, either presidents declare the “audacity of hope” or they affirm a “charge to keep.” If President Obama is the liberal crusader, President George Bush was the conservative defender.

The strategies of presidential leadership differ for the crusader and the defender, but President Obama appears to be misreading the nature of his mandate. Conciliation works for the defender; it can be ruinous to the would-be crusader.

The crusader must have his base with him, all fired up and ready to go. For to go to places unseen, the crusader must have the visionaries, even the crazy ones, on his side. The defender, conversely, must pay homage to partisans on the other side of the aisle because incremental change requires assistance from people, including political rivals, invested in the status quo. Moderate politics require moderate friends.

The irony is that President George Bush, a self-proclaimed defender, spent too much time pandering to his right-wing base, while Barack Obama, a self-proclaimed crusader, is spending a lot of time appeasing his political rivals. Their political strategies were out of sync with, and perhaps even inconsistent with, their political goals.

Take the issue of gay rights for President Obama. The President is trying so hard to prove to his socially conservative political rivals that he is no liberal wacko that he has reversed his previous support for a full repeal of the Defense of Marriage Act (DOMA). What he may not have realized is that, while it may be politically efficacious for a defender to ignore his base, the costs to a crusader of alienating his base are far graver. Bipartisanship is not equally rewarding in all leadership contexts.

Consider the example of President Bill Clinton, a “third-way” Democrat. He ended welfare as we knew it, and on affirmative action he said “mend it, don’t end it.” Much to organized labor’s chagrin, he even signed NAFTA. Bill Clinton was no crusader. And if the Democratic base had wanted a deal-making, favor-swapping politico, it would have nominated a second Clinton last year.

The crusader rides on a cloud of ideological purity. Without the zealotry and idolatry of the base, the crusader is nothing; his magic extinguished. And this is happening right now to Barack Obama.

The people who gave the man his luster are also uniquely empowered to take it away. (It is a mistake to think that Sean Hannity or Michael Steele has this power.) Obama campaigned on changing the world, and his base can and will crush him for failing to deliver on his audacity. The Justice Department’s clumsy defense of DOMA, with its recourse to case law on incest and pedophilia, may be a small matter in the administration’s scheme of things, but it is a big and repugnant deal to the base, the people who matter for a crusading president.

This is a pattern in the Obama administration: the promise to pull troops out of Iraq came with a concomitant promise of more troops in Afghanistan; the release of the OLC “torture memos” came with an offer of immunity to operatives who used harsh interrogation techniques; and the administration’s defense of DOMA came with Obama’s promise to extend benefits to same-sex partners of federal employees. This is incremental, transactional, and defensive leadership. Defenders balance, but crusaders are mandated to press on. Incremental leadership works for presidents mandated to keep a charge, but not for one who flaunted his audacity. There are distinct and higher expectations for a crusader-to-be, and if President Obama is to live up to his hype, then bear the crusader’s cross he must.

By: Rebecca,

on 6/8/2009

Blog:

OUPblog

JacketFlap tags:

Health,

Animal,

Science,

Animals,

A-Featured,

Medical Mondays,

Odyssey,

rights,

PETA,

welfare,

Animal rights,

Morrison,

animal welfare,

with,

Adrian Morrison,

An Odyssey with Animals,

Adrian,

Adrian Morrison, DVM, PhD, is professor emeritus of Behavioral Neuroscience at the University of Pennsylvania’s School of Veterinary Medicine. In his new book, An Odyssey With Animals, he explores the delicate balance between animal rights and animal welfare. In the original post below he discusses the various shades of gray in this debate.

Throughout history, humanity has associated with animals in ways that have benefited human beings. Animals have been hunted for food and clothing, accepted at our hearths for companionship, and brought into our fields to produce and provide food. Only during the latter two-thirds of the last century could most people, in the developed, wealthy West, begin to imagine living without animals as part of their daily lives. Before then, we were completely dependent on them. As the twentieth century progressed, though, technological advances rendered animals’ visible presence in our lives unnecessary. We can eat a steak without coming close to a living cow, or wear a wool sweater without having to shear any sheep. But now, according to some, we have no need, indeed no right, to interfere in animals’ lives, even to the extent of abandoning their use in life-saving medical research. This belief motivates the animal rights/liberation movement, which follows the thinking of a small group of vocal philosophers.

But what does the term “animal rights” mean in a practical way to most people in our society? All of us do use the word “rights” quite commonly to mean the right of animals in our charge to decent, humane treatment. This is our obligation as humane human beings. Indeed, this duty is embodied in law, and we can be prosecuted and punished if we ignore it, as lawyer/ethicist Jerry Tannenbaum of the University of California, Davis, pointed out to me years ago, when I was focused on the depredations of the “animal rights movement” against biomedical researchers and blind to the obvious. Thus, the ongoing debate (and the recent violence at some California universities, for example) is about a more radical (and unworkable) view of rights. To clarify things in my own mind, I have come up with a ranking of views and behavior, from the extreme to the reasonable, as I see it.

First, there are those within the animal rights and welfare movement who believe that human life is worth no more than that of other animals. Some of these people damage property, threaten the lives of those who use animals, and even attempt to commit assault or murder in their effort to save animals. This subsection of the animal rights movement has been classified by the FBI as “one of today’s most serious domestic terrorism threats.” They are extremists in the truest sense.

Others in the movement, such as those who condemn the fur industry, engage in stunts like parading naked with signs. Though extreme, these tactics do not, to my mind, constitute extremism; they are simply activism. Unfortunately, there are others who damage stores, throw paint on fur coats, and release mink from farms to die in the wild. They obviously fit into the first category: extremists.

Then there are those who gather in peaceful (and lawful) protest, or who contribute money to organizations engaged in some of the activities just described, often because they have been fooled by false claims of animal abuse or by graphic photographs that have been doctored or taken out of context. Of course, overlaps among these groups are possible, if not likely. I would consider these members of the movement, those who object to animal use but do not employ extreme measures themselves, to be animal rights and welfare activists (as opposed to extremists).

Then there are those who use animals but are also involved in efforts to improve their treatment. These individuals make up what I consider to be the animal welfare movement, whether they engage actively through contributions to local humane societies or other good works or simply share the beliefs of those who do. Certainly, I am a member of this group. We think animals have certain claims on us humans when they are under our control, including the right to decent care. Put another way, we believe that, as humans, we have a moral responsibility to treat animals as well as is practically possible.

This position is distinct from the aims of the animal rights/liberation movement, and here I think it is important, again, to acknowledge the difference between “animal rights” as envisioned by the movement and “animal welfare.” Those who belong to the animal rights/liberation group believe in severely limiting the ways humans use animals, encouraging our removal from the animal world in many respects. Those who belong to the animal welfare group wish to improve animal health and welfare in a number of different contexts.

There are those I place in an extreme animal welfare camp whom I consider less than reasonable, though: they object to the idea that many species are “renewable resources” that humans may justifiably use, for example by hunting them for food or fur. They aim to change drastically the way we use animals. I, on the other hand, think that animals are a renewable resource and that ensuring animals’ welfare while they are alive, and providing a humane death for a legitimate purpose, is our only charge.

Finally, it is my perception that over the years there has been a noticeable shift toward use of an umbrella term, animal protectionism. I do not favor this designation because, though a noble-sounding banner, it could easily cloak an extremist fringe.

Now, you can decide where you fit in this spectrum.

VBT – Writers on the Move has a new feature to its marketing campaign – Viewpoint.

This new feature begins today, and Carolyn Howard-Johnson is launching the segment at her site: www.sharingwithwriters.blogspot.com

The title of Carolyn’s article is:

Amazon, Reviews, Free Speech and More. C'mon, Let's Rant!

What’s happening is that Amazon has decided to restrict promotion on its site. Authors have always posted reviews of other authors' books on Amazon and included a link back to their own Amazon book page as part of their usual signature. Amazon, in its questionable wisdom, has disallowed this practice. When signing your review, you can put only your name, nothing else.

Does Amazon have a right to do this? Is this a fair practice?

I personally don’t understand Amazon’s motives for this attack on authors. If there are Amazon customers who feel Amazon shouldn’t be a promotional venue for authors, they don't have to use the links authors provide.

Thinking it through clearly, everyone benefits when authors/reviewers include links back to their own selling pages:

1. The Amazon customer reading that review may feel confident that the reviewer, as an author, may know what he/she is talking about. This may encourage a purchase.

2. Amazon sells that book.

3. The author/reviewer may get someone to follow his link back to his selling page and make a sale.

4. Amazon sells that book.

It seems to be a win-win situation.

To read the original article by John Kremer and then Carolyn’s response, go to Sharing with Writers right now. Let us know what you think about this!

Karen

By: Rebecca,

on 4/14/2009

Blog:

OUPblog

JacketFlap tags:

Law,

facebook,

myspace,

privacy,

A-Featured,

A-Editor's Picks,

Media,

cyberspace,

rights,

responsible,

Jon Mills,

Jon Mills is a professor and dean emeritus at the University of Florida’s Fredric G. Levin College of Law. Among his many roles, he served as speaker of the Florida House of Representatives and as the founding director of UF’s internationally recognized Center for Governmental Responsibility. He is the author of many books, including his latest, Privacy: The Lost Right.

When you find yourself on a dark street in a dangerous area of your city, you probably keep a wary eye out for trouble. Conversely, when you sit in front of your computer screen with a cup of coffee in your home or office, you probably feel completely safe and secure. But wait. We are learning that cyberspace, like any community, has its own mean streets and they aren’t always clearly marked.

Cyberspace, whatever that is, has its own predators, spies, abusers, and liars. Like the real world, the online world includes bad people and shady deals. We have recently learned that our government was probably spying on many of us illegally, despite its enormous power to spy on us legally. But as long as you trust the government at all levels, you should have no worries. And what about all the information we gladly post about ourselves on the internet?

Let’s start with the government. Spying is a well-established function of government and has been for thousands of years. Sometimes it involves finding terrorists or criminals, and we like it when that happens. But there are other times when governmental power has been abused at the expense of citizens. Remember Richard Nixon’s enemies lists that targeted journalists? How about McCarthyism, when professors, actors, and others were spied on and politically persecuted? We don’t like it when government bullies its citizens.

It’s interesting to note that government is much better equipped to spy today and has been given more authority to do it under policies such as the PATRIOT Act. Over the past eight or so years, under the very real threat of terrorism, Congress has authorized unprecedented intrusions into the privacy of American citizens, including warrantless searches, secret courts and immunity to companies that provide our confidential information to the government.

Technology has made privacy intrusions much easier to accomplish and more difficult to detect. The lists that required so much time to develop in the Nixon and McCarthy eras are now compiled by a good search engine quickly and without notice. Who subscribes to socialist magazines? Who contributed to liberal causes? Who attends meetings of the ACLU? This information is instantly available. Today’s spies are software geeks, not guys in dark shades.

Beyond government spies, some of the greatest privacy violations are facilitated by voluntary disclosures. The recent controversy over Facebook’s treatment of users’ information as its own is important, but information willingly shared with others has substantial potential for damage as well. In a Facebook environment, when an individual shares information, even with a limited group, what expectation of privacy is there really? What if that shared information is forwarded to others? Realistically, once information is shared on the Internet, it’s no longer private, like it or not. Your information, once you put it out there, may be forwarded to others who may not be as discreet with it as you would want. When a prospective employer slides a MySpace or Facebook picture across the desk to you, you may not have known it was available or even that it had been taken. In addition to shared information getting away from users, many Facebook users don’t set their profiles to private, leaving them open to viewing by anyone, friend or foe.

There are also websites devoted to digging up information from social websites. Spokeo.com says it “will find every little thing your friend (or enemy, as the case may be) has said, done and posted on the internet. Nothing is secret…”. We are also subject to instant searches of all public information related to each of us. ZabaSearch is committed to making that information available. ZabaSearch CEO Nick Matzorkis says public information online is “a 21st century reality with or without ZabaSearch.” The amount of individual information publicly available is staggering.

We need to be aware of that reality and not think of cyberspace as a pure and wonderful new world, because when we’re online, we’re wandering a neighborhood that has predators, spies, abusers, and liars. We need to keep our eyes open for trouble, even when we’re having coffee in our living room while surfing the net.

By: Rebecca,

on 11/4/2008

Blog:

OUPblog

JacketFlap tags:

President Obama,

of,

Rights,

States,

United,

History,

Biography,

Politics,

Current Events,

American History,

President,

A-Featured,

A-Editor's Picks,

Media,

civil rights,

Barack Obama,

America,

Obama,

African American Studies,

Barack,

Civil,

In the article below, written several weeks before Obama became President-elect, scholar Steven Niven, Executive Editor of the African American National Biography and the forthcoming Dictionary of African Biography, examines the historic candidacy of Barack Obama within the context of the civil rights movement and the changing nature of black politics. This article originally appeared on The Oxford African American Studies Center.

Barack Obama Jr., the first African American presidential nominee of a major political party, was born in Honolulu, Hawaii, on August 4, 1961. His birth coincided with a crucial turning point in the history of American race relations, although like many turning points it did not seem so at the time. Few observers believed that Jim Crow was in its death throes. Seven years after the Supreme Court’s Brown ruling, less than 1 percent of southern black students attended integrated schools. Southern colleges had witnessed token integration at best. In early 1961 Charlayne Hunter-Gault and Hamilton Holmes integrated the University of Georgia, but James Meredith’s application to enter Ole Miss that same year was met by Mississippi authorities with a “carefully calculated campaign of delay, harassment, and masterly inactivity,” in the words of federal judge John Minor Wisdom. Despite the Civil Rights Acts of 1957 and 1960 and promises from the new administration of President John F. Kennedy, the voting rights of African Americans remained virtually nonexistent in large swathes of Mississippi, Alabama, and Louisiana.

However, the Freedom Rides that began in the spring of 1961 and the voting rights campaign that Robert P. Moses initiated in McComb, Mississippi, in the very week of Obama’s birth signaled a hardening of African American resistance. Among a cadre of activists there was a new determination to confront both segregation and the extreme caution of the Kennedy administration on civil rights. Later that fall, Bob Moses wrote a note from the freezing drunk tank in Magnolia, Mississippi, where he and eleven others were being held for attempting to register black voters: “This is Mississippi, the middle of the iceberg. This is a tremor in the middle of the iceberg from a stone that the builders rejected.”

Over the next three years, Moses, Stokely Carmichael, James Farmer, James Forman, John Lewis, Diane Nash, Marion Barry, James Bevel, Bob Zellner, and thousands of other activists devoted their lives to shattering that iceberg. Some, including Jimmy Lee Jackson, James Chaney, Andrew Goodman, and Michael Schwerner, gave their lives in that cause. They took the civil rights struggle to the heart of the segregationist South: to McComb, Jackson, and Philadelphia, Mississippi; to Albany, Georgia; and to Birmingham, Alabama. By filling county jails and prison farms, and by facing fire hoses, truncheons, and worse, they ultimately made segregation and disfranchisement untenable, paving the way for the 1964 Civil Rights Act and the 1965 Voting Rights Act.

Obama’s childhood experience of the dramatic changes wrought by the 1960s, seen from the vantage point of Hawaii and Indonesia, necessarily differed from that of most African American contemporaries in rural Mississippi or urban Detroit. But it would be a mistake to argue that he was untouched by those developments. His black Kenyan father, Barack Obama Sr., met his white Kansan mother, Ann Dunham, at the University of Hawaii, where the elder Obama had gone to study on a program founded by his fellow Luo, Tom Mboya. Mboya’s program received financial support from civil rights stalwarts, including Jackie Robinson, Harry Belafonte, and Sidney Poitier. After Obama Sr. left his wife and child behind in 1963, Ann Dunham became the dominant figure in young Barry Obama’s formative years, and Obama has argued that the values his mother taught greatly shaped his worldview. Those values were largely secular but grounded in the church-based interracial idealism of the early 1960s civil rights movement: the beloved community inspired by Martin Luther King Jr.’s rhetoric, Fannie Lou Hamer’s heroic activism, and Mahalia Jackson’s gospel singing.

After Obama returned from Indonesia in 1971 to live with his white grandparents and attend school in Hawaii, his formal education was supplemented by his friendship with “Frank,” an African American drinking buddy of his grandfather, who tutored the young Obama in the history of black progressive struggles. The scholar Gerald Horne has speculated that Frank may have been Frank Marshall Davis, a pioneering radical journalist in the 1930s whose jazz criticism and poetry were influential in the Black Arts Movement of the 1960s. Davis, a Kansas native, moved to Hawaii in the late 1940s.

Obama’s work as an anti-poverty activist in Chicago in the 1980s likewise built on the legacy of Arthur Brazier and other 1960s community organizers influenced by Saul Alinsky. Arriving in Chicago in the era of Harold Washington also helped school Obama in the ways of Chicago politics. As the director of a major “get-out-the-vote” drive in Illinois in the 1992 elections, he helped elect both Bill Clinton to the presidency and Carol Moseley Braun to the U.S. Senate. Connections through his wife, Michelle Robinson Obama, who had lived in Chicago’s working-class black South Side, was a school friend of Santita Jackson (daughter of Jesse Jackson), and had been an aide to Mayor Richard Daley, certainly helped Obama win friends and influence the right people in Chicago’s Democratic Party. The luster of his fame as the first African American president of the prestigious Harvard Law Review, as well as his self-evident political and rhetorical skills, undoubtedly set Obama apart from the general pack of political hopefuls. In 1996 he easily won a seat in the Illinois Senate, representing a district that encompassed the worlds of both “Obama the University of Chicago Law Professor” (liberal, wealthy, and cosmopolitan Hyde Park) and “Obama the community organizer” (the district’s poorer neighborhoods, which housed the headquarters of Operation Breadbasket).

Obama’s achievements in the Illinois legislature were solid, though not spectacular. His cool demeanor, cerebral approach, and links to Hyde Park liberalism irked established black leaders in Springfield, veterans of the civil rights struggles of the 1960s and 1970s, who viewed Obama as a Johnny-come-lately who had not paid his dues. The charge that he was somehow “not black enough” came to the fore in his unsuccessful 2000 primary challenge for the U.S. congressional seat held by former Black Panther Bobby Rush. Although Obama won a majority of white primary voters, Rush won the vast majority of black voters and defeated Obama by a margin of 2 to 1, successfully depicting him as a Harvard-educated Hyde Park elitist at odds with the more prosaic values of the mainly black, working-class district.

Despite that setback, Obama stunned political observers four years later by winning the 2004 Democratic primary for U.S. Senate in Illinois and then crushing his (admittedly very weak) Republican opponent, Alan Keyes, in the general election. Keyes, a black, ultraconservative, fundamentalist pro-lifer who had been a minor diplomat in the Ronald Reagan administration and had few direct links to Illinois, was placed on the ballot after the primaries because the Republican primary winner had dropped out following a sex scandal. Obama also benefited from a well-received keynote address to the 2004 Democratic National Convention (DNC) in Boston. It was at the DNC that most Americans first heard and saw the self-described “skinny kid with a funny name,” who urged his fellow citizens to look beyond the fierce partisanship that had characterized politics since the 1990s.

“The pundits like to slice and dice our country into Red [Republican] States and Blue [Democratic] States,” he told the watching millions.

But I’ve got news for them, too. We worship an awesome God in the Blue States, and we don’t like federal agents poking around in our libraries in the Red States. We coach Little League in the Blue States and yes, we’ve got some gay friends in the Red States. . . . We are one people, all of us pledging allegiance to the Stars and Stripes, all of us defending the United States of America.

Obama’s arrival in the Senate in January 2005 provoked significant media interest, verging on what some have called Obama-mania. He was, after all, only the fifth African American to serve in that body in 215 years, following Hiram Rhodes Revels, Blanche Bruce, Edward Brooke, and Moseley Braun. But media scrutiny and popular interest in the new senator went far beyond the attention given to Moseley Braun in 1992. In part, this was because Obama’s election symbolized a broader generational shift in African American politics. Black political gains in the 1970s, 1980s, and 1990s were largely achieved by a generation of politicians who came of age either in the southern civil rights movement, such as John Lewis, Eva Clayton, Vernon Jordan, and Andrew Young, or in urban Democratic politics, such as Charles Rangel in New York and Willie Brown in San Francisco. Obama was not the only Ivy League–educated black politician to emerge in the early 2000s. In 2002, Artur Davis, like Obama a Harvard Law School graduate, won election to the House of Representatives from Alabama; in 2006, Deval Patrick, another Harvard Law graduate, became only the second African American since Reconstruction to be elected governor of a state (Massachusetts); and Cory Booker (Yale Law School and Queen’s College, Oxford) and Michael Nutter (University of Pennsylvania) were elected mayors of Newark in 2006 and Philadelphia in 2007, respectively. Harold Ford Jr., a Penn graduate and Tennessee congressman, narrowly lost a U.S. Senate race in Tennessee in 2006. Patrick and Nutter are a few years older than Obama, while Davis (b. 1967), Booker (b. 1969), and Ford (b. 1970) are slightly younger.
In terms of ideology, these politicians also share a commitment to post-partisanship, although Ford, now leader of the Democratic Leadership Council, and Davis have been more willing to adopt socially as well as economically conservative positions, so as to broaden their appeal as possible statewide candidates in the South.

But perhaps the most remarkable facet of Obama-mania is the rapidity with which the freshman senator was discussed as a possible presidential candidate in 2012 or 2016. Or rather, that would have been the most remarkable facet, had Obama not sought and then won the Democratic nomination for president in 2008! It is hard to think of a comparable American politician whose rise has been so swift, dramatic, or unforeseen, except perhaps that other, most famous Illinois politician, Abraham Lincoln.

Whether Obama follows Lincoln as the second U.S. president from Illinois is unknown at the time of writing, five weeks before Election Day, 2008. At the very least, Obama’s candidacy marks another tremor in the iceberg that Bob Moses faced in that Magnolia drunk tank in the fall of 1961, and that James Meredith faced down while integrating Ole Miss in the face of a full-force white riot a year later. It is, then, entirely fitting, and a reasonable marker of American progress in race relations, that forty-six years later Barack Obama became the first African American major-party nominee to take part in a presidential debate, and that he did so not just in Mississippi but at Ole Miss itself, the hallowed symbol of segregationist resistance.

If you are in or near the Pittsburgh area and would like to share ideas with a group of interesting, socially responsible librarians, consider going to the Progressive Library Skillshare. It’s my birthday weekend, so I’ll most likely be someplace else, but otherwise it would be on my to-do list.

Your World

Hi Jessamyn. I’m so glad you’re a broken record on this issue. I am too, and I feel that the message still hasn’t been repeated often enough.

For my longest piece on this topic, see “Open access for digitization projects,” first published in my newsletter for July 2, 2009 [ http://goo.gl/NPQF ], and reprinted with some revisions in Karl Grandin (ed.), _Going Digital: Evolutionary and Revolutionary Aspects of Digitization_, Nobel Foundation, Royal Swedish Academy of Sciences, April 2011 [ http://goo.gl/bVnSl ].

I’m currently co-convening the National and State Libraries of Australasia (NSLA) Copyright Working Group and read this article with great interest. NSLA has made a commitment to ensure that “public domain works are, to the greatest extent possible, accessible and available for unrestricted re-use by the public.” At the moment we are considering how to make this an easy process, so we are looking at options to standardise the way we identify our public domain collections, in a way that is easy to understand and that eliminates complex registration or permission barriers while still maintaining appropriate acknowledgments (for creators and library collections). Here’s the NSLA Position Statement on Public Domain: http://www.nsla.org.au/sites/default/files/publications/NSLA.Public_Domain_Statement.pdf

Janice van de Velde