Coming soon to a screen near you: animation from Ghana.

The post Meet the Passionate Artists Who Are Building An Animation Industry in Ghana appeared first on Cartoon Brew.

A quick scan of issues of the most highly ranked African studies journals published within the past year will reveal only a handful of articles published by Africa-based authors. The results would not be any better in other fields of study. This underrepresentation of scholars from the continent has led to calls for changes in African universities, with a focus on capacity building. The barriers to research and publication in most public universities in Africa are many.

The post Engendering debate and collaboration in African universities appeared first on OUPblog.

Poverty can be defined as 'the condition of having little or no wealth or few material possessions; indigence, destitution' and is a growing area within development studies. In time for The Development Studies Association annual conference taking place in Oxford this September, we have put together this reading list of key books on poverty, including a variety of online and journal resources on topics ranging from poverty reduction and inequality to economic development and policy.

The post Poverty: a reading list appeared first on OUPblog.

In an effort to address misconceptions about gender and location in relation to academic publishing in Africa, the editors of African Affairs reached out to Ryan C. Briggs and Scott Weathers to discuss the findings from their recent research in more detail.

The post Gender and location in African politics scholarship: Q&A with Ryan C. Briggs and Scott Weathers appeared first on OUPblog.

African Studies focuses on the rich culture, history and society of the continent; however, the growing economies of African countries have become an increasingly significant topic in economic literature. This month, The Centre for the Study of African Economies annual conference is taking place in Oxford. To raise further awareness of the growing importance of the study of African economics, we have created this reading list of books, journals and online resources that explore the varied areas of Africa and its economy.

The post African studies: a reading list appeared first on OUPblog.

Over two years ago I wrote that “new viruses are constantly being discovered... Then something comes out of the woodwork like SARS which causes widespread panic”. Zika virus infection bids fair to repeat the torment. On 28 January 2016 the BBC reported that the World Health Organization had set up a Zika “emergency team” as a result of the current explosive pandemic.

The post Another unpleasant infection: Zika virus appeared first on OUPblog.

After receiving nearly 1,400 submissions from across Africa, South African studio Triggerfish chose 8 projects for further development.

The post Triggerfish’s Story Lab Announces 8 African Winners appeared first on Cartoon Brew.

Today is Human Rights Day. It commemorates the day in 1948 when the United Nations General Assembly adopted the Universal Declaration of Human Rights. The Universal Declaration of Human Rights lists basic rights and freedoms that every person should get, regardless of race, religion, sexual orientation, or gender.

Books are a great way for readers to learn about history and culture, and develop empathy for other people.

Our Human Rights collection explores the issues of human rights around the world and in the United States, and the great leaders who have fought to protect those rights:

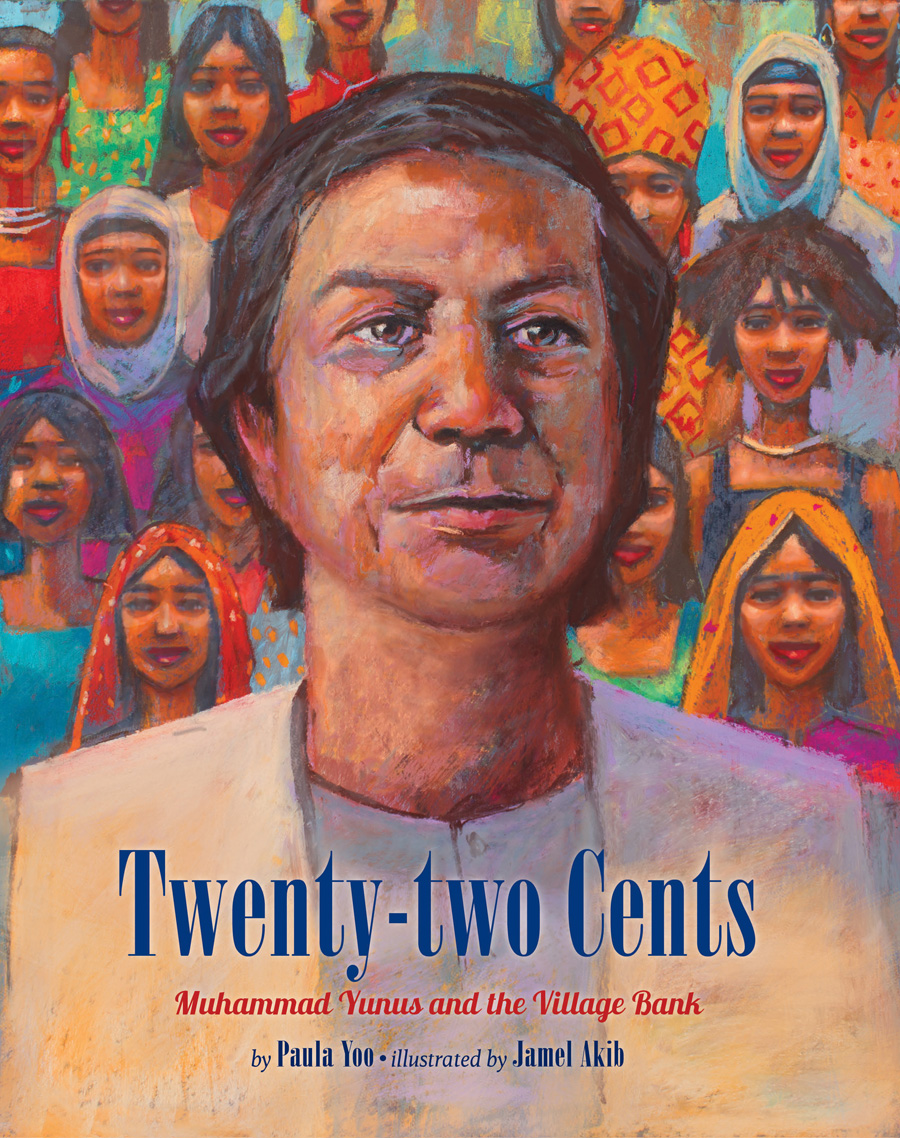

Twenty-two Cents : Growing up in Bangladesh, Muhammad Yunus witnessed extreme poverty. He later founded Grameen Bank, a bank which uses microcredit, lending small amounts of money, to help lift people out of poverty. In 2006, Dr. Yunus was awarded the Nobel Peace Prize.

Brothers in Hope: Thousands of boys from southern Sudan walk hundreds of miles to seek safety, from Ethiopia to Kenya. This inspiring story is based on the true events of the Lost Boys of Sudan.

When the Horses Ride By: These poems, written from the point of view of children during times of war, show readers the resilience and optimism of children who live through such situations.

Irena’s Jars of Secrets: Irena Sendler, a social worker born to a Polish Catholic family, smuggled clothing and medicine into Jewish ghettos during WWII and then started to smuggle Jewish children out of the ghettos. Hoping to reunite them with their families, Irena kept lists of children’s names in jars.

John Lewis in the Lead: After high school, John Lewis joined Dr. King and other civil rights leaders to peacefully protest and fight against segregation. In 1986, John Lewis was elected to represent Georgia in Congress, where he continues to serve today.

A Place Where Sunflowers Grow: This bilingual Japanese-English picture book depicts life in a Japanese internment camp, inspired by author Amy Lee-Tai’s family’s experiences during WWII. Young Mari wonders if she’ll be able to come up with anything to draw in a place where nothing beautiful grows.

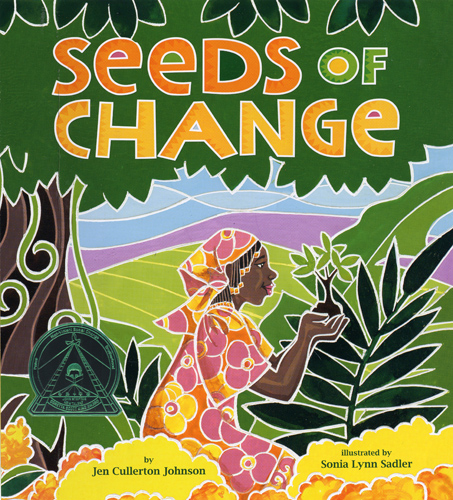

Seeds of Change: As a young girl, Wangari Maathai was taught to respect nature and people. She excelled in science and later studied abroad in the United States. When she returned home, she helped promote the rights of women and also began to plant trees to replace those that had been cut down. In 2004, Wangari Maathai became the first African woman to win the Nobel Peace Prize.

Etched in Clay: This biography in verse follows the life of Dave the Potter, an enslaved young man in South Carolina who engraved poems into the pots he sculpted despite the harsh anti-literacy laws of the time.

Yasmin’s Hammer: Yasmin and her family are refugees in Bangladesh. Young Yasmin works at a brick yard to help her family out, but she longs for the day when she can attend school.

A Song for Cambodia: This is the inspirational true story of Arn Chorn-Pond, who was sent to a work camp by the Khmer Rouge regime in Cambodia. His heartfelt music created beauty in a time of darkness and turned tragedy into healing.

The Mangrove Tree: Dr. Gordon Sato, himself a survivor of a Japanese internment camp, travels to the impoverished village of Harigogo in Eritrea and plants mangrove trees to help it become a self-sufficient community.

Want to own this book list? Purchase the whole collection here.

More resources

Is Staff Diversity Training Worth It?

Interpreting César Chávez’s Legacy with Students

7 Core Values to Celebrate During Black History Month

11 Educator Resources for Teaching Children About Latin American Immigration and Migration

The Opposite of Colorblind: Why it’s essential to talk to children about race

Protesting Injustice Then and Now

Thoughts on Ferguson and Recommended Resources

Character Day: Taking a Look at the Traits Needed to Do What’s Right

Books for Children and Educators About Kindness

Infographic: 10 Ways to Lend a Hand on #GivingTuesday

Does it matter when the first blood transfusion occurred in Africa? If we are to believe the Serial Passage Theory of HIV emergence, then sometime in the early twentieth century.

The post The first blood transfusion in Africa appeared first on OUPblog.

The 2014 Men’s World Cup finals pitted Germany against Argentina. Bets were made and various observations were cited about the teams. Who had the better defense? Would Germany and Argentina’s star players step up to meet the challenge? And, surprisingly, why did Argentina lack black players? Across the globe, blogs and articles found it ironic that Germany fielded a more diverse team while Argentina, with a history of slavery, did not have a solitary black player.

The post An African tree produces white flowers: The disappearance of the black population in Argentina 110 years later appeared first on OUPblog.

Untamed: The Wild Life of Jane Goodall Written by Anita Silvey Foreword by Jane Goodall National Geographic Kids 6/09/2015 978-1-4263-1518-3 96 pages Age 8—12 “At age 26, Jane Goodall was a headstrong young woman fulfilling her dream of living in an African wilderness. She spent her days exploring with the chimpanzees—animals she …

A common perception is that the problem with Africa is its leaders. In 2007, Sudanese billionaire Mo Ibrahim even created a major cash prize through his charitable foundation as an incentive to African heads of state to treat their people fairly and equitably and not use their countries’ coffers for their personal enrichment.

The post There are many excellent African leaders appeared first on OUPblog.

On his recent visit to Kenya, President Obama addressed the subject of sexual liberty. At a press conference with the Kenyan President Uhuru Kenyatta, he spoke affectingly about the cause of gay rights, likening the plight of homosexuals to the anti-slavery and anti-segregation struggles in the United States.

The post Compassionate law: Are gay rights ever really a ‘non-issue’? appeared first on OUPblog.

Effective wildlife conservation is a challenge worldwide. Only a small percentage of the earth’s surface is park, reserve, or related areas designated for the protection of wild animals, marine life, and plants. Virtually all protected areas are smaller than what conservationists believe is needed to ensure species’ survival, and many of these areas suffer from a shortage of

The post Cecil the lion’s death is part of a much larger problem appeared first on OUPblog.

Just over a year ago, in March 2014, UNU-WIDER published a Report called: ‘What do we know about aid as we approach 2015?’ It notes the many successes of aid in a variety of sectors, and that in order to remain relevant and effective beyond 2015 it must learn to deal with, amongst other things, the new geography of poverty; the challenge of fragile states; and the provision of global public goods, including environmental protection.

The post The future of development – aid and beyond appeared first on OUPblog.

Since the promulgation of the revised missal, popularly known as the Novus Ordo, by Pope Paul VI with the Apostolic Constitution Missale Romanum in 1969, a growing call for either a return to the Tridentine Mass or recognition of the legitimate place of such a rite alongside the Novus Ordo has gained an international status. Groups […]

The post Insights into traditionalist Catholicism in Africa appeared first on OUPblog.

"When I went to the Iv'ry Coast, about thirty years ago, I remember coming off the plane and just being assaulted with not only the heat but the color." These were the first words of the most moving story I have ever heard—but it wasn’t the story I was there to collect. For me, the best oral histories are the ones that sound a human chord, stories that blur the spaces between historically significant narrative and personal development.

The post Uniqueness lost appeared first on OUPblog.

SOF made quite a reputation in the early years of publication for fearless, firsthand reporting from the bloody battlefields of Rhodesia. Our efforts in that ill-fated African nation and our support of the Rhodesian government in operations against communist insurgents gained us two unfortunate, undeserved labels: racists and mercenaries. We are neither. On the other hand, we have never avoided consorting with genuine mercs to insure readers get the look and feel of Third World battlefields.

It's true that anti-communism was the primary ideology of SoF in the 1970s and 1980s and that they would take the side of anyone they considered anti-communist regardless of their race or nationality — they published countless articles supporting the mujahideen in Afghanistan, the Karen rebels in Burma (heroes of Rambo 4), and the contras in Nicaragua. (Ronald Reagan, he of the Iran-Contra scandal, supported white Rhodesia even longer than Henry Kissinger, causing them to have their first public disagreement. See Rick Perlstein's The Invisible Bridge pp. 671-673.) But the kind of anti-communism that supported Ian Smith's Rhodesia and apartheid South Africa was an anti-communism that supported white supremacist government.

I suppose he wanted to move someplace where everything was white and bright, so after a yearlong stint at the Nazi Party headquarters, he wound up going to Rhodesia, and he joined the Rhodesian Army. In different blogs and writings, he was always bragging, "Oh, I was a mercenary in Rhodesia and I went out and did all this fighting." But to the best of my knowledge, according to the letters he wrote to my parents, he was a file clerk. He certainly never fired a shot in anger. He started agitating over there, and the [white-led] Ian Smith government said, "We have problems enough without this nutcase," and they bounced him.

The myth of the lost white land of Rhodesia has proved resilient for the paramilitary right. It plays into macho adventure fantasies as well as terror fantasies of black hordes wiping out virtuous white minorities. Rhodesia sits comfortably among the other icons of militia culture, as James William Gibson showed in his 1994 book Warrior Dreams, in which he described a visit to a Soldier of Fortune convention:

All the T-shirts had their poster equivalent, but much else was available, too. John Wayne showed up in poses ranging from his Western classics to The Sands of Iwo Jima (1949) and The Green Berets (1968). Robocop and Clint Eastwood's Dirty Harry decorated many a vendor's stall. An old Rhodesian Army recruiting poster with the invitation "Be a Man Among Men" hung alongside a "combat art" poster showing a helicopter door gunner whose wolf eyes stared out from under his helmet; heavy body armor and twin machine gun mounts hid his mortal flesh. (157-158)

Anti-communism doesn't have much resonance these days, and so the support of Rhodesia or apartheid South Africa can no longer be couched in any terms other than ones of white supremacy — terms that were previously always at least in the shadows. Militarism, machismo, and white supremacy have no objection to hanging out together, and the result of their association is often deadly.

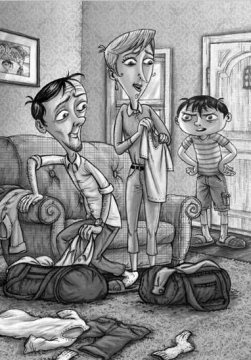

#02 Jack and the Wild Life

Series: The Berenson Schemes

Written by Lisa Doan

Illustrated by Ivica Stevanovic

Darby Creek 9/01/2014

144 pages Age 9—12

“After a wild plan by his parents left Jack stranded in the Caribbean, the Berenson family decided to lay out some rules. Jack’s mom and dad agreed they wouldn’t take so many risks. Jack agreed he’d try to live life without worrying quite so much. Then Jack’s parents thought up another get-rich-quick scheme. Now the family’s driving around Kenya. An animal attack is about to send Jack up a tree—alone, with limited supplies. As Jack attempts to outsmart a ferocious honey badger and keep away from an angry elephant, he’ll have plenty of time to wonder if the Berenson Family Decision-Making Rules did enough to keep him out of trouble.” [book jacket]

Review

The Berenson family adults are constantly trying to find an easy way to make a fortune, conjuring up one odd scheme after another. Jack is the one that pays the price for these awful plans, while his parents wander through life unaware of most everything around them, including their missing son. This makes for many comical situations and gives the series its heart. This time, the Berensons fly to Africa, Jack in tow, because, as Dad tells Jack,

“Your mum and I have invented a brand-new kind of tourism . . . a surefire moneymaking opportunity.”

They plan to build a tourist camp where people can live like a real Maasai tribe. Using mud, sticks, grass, and more mud, Jack’s parents plan to build the Maasai mud-huts tourists will gladly rent to experience tribal life (and a fence to keep out the lions). The best part of their plans, the two adults believe, is they need no money to build their attraction—Mother Nature supplies the materials. Jack is not thrilled. He finally had a “normal” life, a home, parents who held down real 9-to-5 jobs, and a new friend—Diana. Once summer began to fade into fall, Jack’s parents could no longer do that “grind.” But this time things will be different: Jack’s parents will plan ahead, not take any risks, and not lose Jack. Changing their ways proves more difficult than the parents thought, as things do not go as planned, risks are taken, and, well, Jack . . . he ends up in a tree.

Poor Jack, now he is in Africa, stuck up a tree, while his parents—yet to realize Jack flew out of the rented Jeep—are trying to find the guide for their new camp. Jack must protect himself from animals on the ground and the ones that can get past the fence he built around the tree. He sleeps in the tree, eats in the tree, and fears for his life—and the life of Mack, Diana’s stuffed monkey—in the tree. The last time his parents had a get-rich-quick scheme, Jack feared for his life on a deserted island. (#1 – Jack the Castaway reviewed here).

The Berenson Schemes is a wonderful series, especially for kids that wish they could take control. With roles reversed, Jack acts more the parent, setting rules and following through. Meanwhile, Jack’s parents act more like spoiled, unruly children, who care about themselves first and Jack second. They do love their son, but cannot get it together as adults. In book #2, Jack and the Wild Life, the family has new decision-making rules in the hopes that Jack’s parents will be parents that are more responsible. As Jack makes a tree-bed out of duct tape and reads his Kenya guide, he thinks maybe the rules are not working as he had hoped they would.

I love the black and white illustrations. Stevanovic does a great job of enhancing the story, giving readers a view into Jack’s situation and his emotions. I wish I had more images to show readers. The full-page illustrations are fantastic and have been in both books. By the end of the story, Jack’s parents may see the errors of their ways and promise Jack they will try harder to change . . . until the next edition, when they tire of being adults, devise a new scheme, and hook Jack into their plans. The Berenson Schemes #2: Jack and the Wild Life is great fun and I look forward to each new scheme and Jack’s consequences for merely being his parents’ child. Kids will love the mayhem Doan creates and the magic in Stevanovic’s illustrations. Book #3, Jack at the Helm, was released this past March 2015.

JACK AND THE WILD LIFE (THE BERENSON SCHEMES #2). Text copyright © 2014 by Lisa Doan. Illustrations copyright © 2014 by Lerner Publishing Group, Inc. Reproduced by permission of the publisher, Darby Creek, Minneapolis, MN.

Purchase Jack and the Wild Life at Amazon—Book Depository—iTunes—Darby Creek.

Learn more about Jack and the Wild Life HERE.

CCSS Guide for Teachers HERE.

Meet the author, Lisa Doan, at her website: http://www.lisadoan.org/

Meet the illustrator, Ivica Stevanovic, at his website: http://ivicastevanovicart.blogspot.com/

Find more middle grade books at the Darby Creek website: http://bit.ly/DarbyCreek

Darby Creek is a division of Lerner Publishing Group, Inc.

The Berenson Schemes

#01 – Jack the Castaway – 2015 IPPY Gold Medalist for Juvenile fiction

Copyright © 2015 by Sue Morris/Kid Lit Reviews. All Rights Reserved

The more money you make, the more you lose. That is the story of Africa over the past two decades. Indeed, along with the impressive record of economic growth acceleration spurred by booming primary commodity exports, the African continent has experienced a parallel explosion of capital flight.

The post Capital flight from Africa and financing for development in the post-2015 era appeared first on OUPblog.

May Contain Spoilers

Review:

I didn’t read any further in the blurb than “elephant research and rescue camp” before I added The Promise of Rain to my TBR. Imagine my delight when the library actually acquired a copy so soon after the release date! It’s one of the first novels in the Harlequin Heartwarming line that I’ve read, and while I enjoyed the story, I have mixed feelings about certain aspects of it.

Anna Bekker’s life revolves around two things: her four-year-old daughter, Pippa, and the elephants she’s studying. When the head of the research department back in the States starts exerting pressure on her about expenses and results, she knows that her funding is in danger. When she’s told someone will be visiting the camp to audit the books, the last person she expects is Jackson Harper, her former best friend and the love of her life. He’s also Pippa’s father, a fact that she’s kept secret from him. Jack is beyond pissed that he’s been kept in the dark about his daughter, and he thinks a wildlife camp in the middle of the Serengeti is the last place she belongs. It’s dangerous! There are wild animals! Snakes! GERMS! Yes, Jack is a germaphobe, but that’s not the biggest reason I couldn’t connect with him. He’s also manipulative, emotionally stunted, and clueless. So, yeah, I didn’t much care for Jack.

Anna, on the other hand, I loved. She’s dedicated to her daughter and to the elephants she’s researching, and the thought of losing her funding is keeping her up nights, sleepless and worried. Having her future rest in Jack’s hands is galling, especially when he’s so angry with her about Pippa. When it turns out that he’s keeping quiet about a conflict of interest regarding her funding, she thinks the chasm between them can’t get any wider. Then Jack threatens to fight for Pippa’s custody, and she realizes just how wrong she was.

The romance didn’t work for me. Jack is too anal and too uptight, and if there was any chemistry between Jack and Anna, I didn’t see it. While they both have trust issues, Jack just didn’t seem like he would ever be capable of being the kind of partner Anna needed. If I hadn’t liked Anna, the elephants, and the secondary characters so much, The Promise of Rain might have been a DNF for me. Instead, I loved the details of Anna’s work and the descriptions of the camp and the wildlife preserve. The romance, unfortunately, fell flat for me.

Grade: C+

Review copy obtained from my local library

From Amazon:

He wants to take her child out of Africa…

The Busara elephant research and rescue camp on Kenya’s Serengeti is Anna Bekker’s life’s work. And it’s the last place she thought she’d run into Dr. Jackson Harper. As soon as he sets eyes on her four-year-old, Pippa, Anna knows he’ll never leave…without his daughter.

Furious doesn’t begin to describe how Jack feels. How could Anna keep this from him? He has to get his child back to the States. Yet as angry as he is with Anna, they still have a bond. But can it endure, despite the ocean—and the little girl—between them?

The post Review: The Promise of Rain by Rula Sinara appeared first on Manga Maniac Cafe.

Although the number of Ebola cases and deaths has jumped dramatically in the short time since we wrote our December Briefing on the epidemic, there are signs of hope. Ebola is slowing down in areas where there was previously high transmission, in Liberia and in Eastern Sierra Leone for example. The lesson from past Ebola epidemics is that learning and local adaptation have played a central role in controlling previous outbreaks; now in West Africa the curve of the epidemic seems to be turning as people alter their behaviour. The apparent avoidance of continued exponential growth is a relief but it is no cause for complacency.

Freetown and the North of Sierra Leone are still suffering heavily. There is likely to be ongoing transmission for some time with sporadic clusters of cases as the epidemic moves into its next phase. The message, that local people should be involved and that their perspectives and knowledge are both valid and valuable, is still essential. Now is the time to find a balance between medical interventions, emergency thinking, and more humane and localised approaches based on collaboration.

As and when the epidemic ends, there should also be no complacency about the structural violence which produced this crisis. Structural violence refers to the way institutions and practices inflict avoidable harm by impairing basic human needs. The long term view — which locates this epidemic in the context of economic, social, technical, discursive and political exclusions and injustices — needs to be at the forefront of recovery and ‘development’ post-Ebola. The stark evidence of violence, in the form of distrust, the collapse of already dysfunctional health services, the catastrophic costs of Ebola on families and countries, the unpaid salaries of nurses and burial teams, the lack of protection – whether in the form of plastic gloves or welfare nets in times of crisis – must not fade with a return to business as usual. The Ebola crisis should be a game-changer for development.

In pointing to structural violence, we aren’t talking of a single social institution, but of overlapping institutions and practices that have produced interlocking inequalities, unsustainabilities, and insecurities. Aid and development have failed to address these conditions. Sierra Leone and Liberia attract considerable foreign direct investment and record some of the world’s highest growth figures yet most of their populations live in continued or worsening poverty. The emerging field of global health emphasizes networks and shared vulnerabilities, but in practice — through disjointed programmes and a tendency towards ‘quick wins’ — has neglected dire inequalities, which mean a virus like Ebola can tear a country up due to an absence of the most fundamental public health and state capacities. These structural and related socio-cultural conditions are not quickly or easily addressed, but Ebola has highlighted how vast disparities, internationally and within countries, are not sustainable. A greater focus on inclusive institutions and economies, and on conceiving of health as a global public good, is needed in order to build trust and resilience. Achieving that will involve asking difficult questions about aid and development as practiced in this region.

Both the crisis response and efforts to address its structural underpinnings are strengthened by recognition of the complex and historically-embedded logics and relationships which shape people’s lives. The Ebola Response Anthropology Platform has been set up to network anthropologists and other social scientists across the world with fieldworkers and communities, and to provide an interface with those planning and implementing the Ebola response so that such perspectives can be integrated into the response. Complementary initiatives, like one supported by the American Anthropological Association, mean that there is now a groundswell of debate and commentary on these critical dimensions. Much of this is building on research conducted over decades of post-colonial development and post-conflict reconstruction that, with the benefit of hindsight, is revealing of the fault-lines of the Ebola epidemic. As ‘the response’ transitions into another phase of reconstruction it is critical that these lessons, and the complexities they reveal, are fully appreciated to prevent further disasters for this region.

Headline image credit: Conakry, Guinea, 2011. Photo by CDC Global. CC BY 2.0 via Flickr.

The post Ebola: the epidemic’s next phase appeared first on OUPblog.

Monica Edinger, author of “Africa is My Home, A Child of the Amistad,” is a former Peace Corps volunteer who began writing children’s books during Sierra Leone’s Civil War. “Sierra Leone and its people were being represented in the media in this really horrendous way,” Edinger said.

She felt it was important to share stories that showed there was more to Sierra Leone than conflict. “Real stories, about real people, make a big difference. But unfortunately that isn’t the standard narrative in children’s books.”

From this article celebrating the Children’s Africana Book Awards.

On this day in 1984, musical aficionados from the worlds of pop and rock came together to record the iconic ‘Do They Know It’s Christmas?’ single for Band Aid. The single has gone down in history as an example of the power of music to help right the wrongs in the world. The song leapt to the number one spot over the Christmas of 1984, selling over a million copies in under a week and totalling sales of three million by the end of that year. The Band Aid super-group featured the cream of eighties pop, including David Bowie, Phil Collins, George Michael, Sting, Cliff Richard and Paul McCartney.

The sales target for the single was £70,000, all of which was to be donated to the African famine relief fund. With support from Radio 1 DJs and a Top of the Pops Christmas Special, sales sky-rocketed and Geldof, feeling the strength of public opinion behind him, went toe-to-toe with the Conservative government in an attempt to have tax on the single waived. Margaret Thatcher initially refused the plea, but as public outcry grew, Thatcher caved in to public demands and the tax on sales worth nearly £9 million was donated back to charity.

Bob Geldof and a host of artists old and new have re-recorded the single to help raise funds to stem the Ebola crisis. Our infographic marks the 30th anniversary of the original recording and illustrates the movers and shakers that made this monumental milestone in pop history possible.

To view free articles examining the cause, the people, and the music, you can open the graphic as a PDF.

Headline image credit: Live Aid at JFK Stadium, Philadelphia, 1985. CC BY-SA 3.0 via Wikimedia Commons.

The post Band Aid (an infographic) appeared first on OUPblog.